By the end of this course, you will be able to:

Before understanding chain-of-thought prompting, you need to understand the method it improves upon. Few-shot prompting is the idea that you can teach a language model a new task just by showing it examples – no retraining required.

Think about how a child learns a new game. You don’t explain every rule abstractly. Instead, you play a round in front of them: “Watch – I draw a card, add the numbers, and say the total.” After watching a few rounds, the child can play too. They learned the pattern from demonstrations, not from a manual.

Few-shot prompting works the same way. You place a handful of input-output examples (called “exemplars”) at the beginning of the text you send to the model. The model then continues the pattern for a new input. This approach was popularized by GPT-3 (see Improving Language Understanding by Generative Pre-Training) and became the standard way to use large language models without fine-tuning.

Here is a concrete 2-shot prompt for a question-answering task:

Q: What is the capital of France?

A: Paris.

Q: What is the capital of Japan?

A: Tokyo.

Q: What is the capital of Brazil?

A:The model sees two complete question-answer pairs, then a new question with an incomplete answer. Because it generates text by predicting the most likely next token given everything before it, it continues in the established pattern and produces “Brasilia.”

Formally, an autoregressive language model generates tokens one at a time. Each new token’s probability depends on everything that came before it. Given \(k\) exemplars and a test input, the model generates:

\[P(y \mid x_{\text{test}}, \{(x_i, y_i)\}_{i=1}^{k})\]

where:

This is the key property: the model’s behavior changes based solely on what text appears in the prompt. Different exemplars produce different behaviors from the same frozen model.

Suppose we have a simple factual question task. We construct a 3-shot prompt:

Q: How many legs does a spider have?

A: 8.

Q: How many planets are in the solar system?

A: 8.

Q: How many sides does a hexagon have?

A:The model processes this token by token. After seeing three examples where questions are answered with a single number, the pattern is clear. The model generates “6.” – matching the format (a number followed by a period) and providing the correct answer.

Now consider what happens if we use zero-shot prompting (no examples):

Q: How many sides does a hexagon have?Without examples establishing the “short answer” format, the model might generate a paragraph: “A hexagon is a six-sided polygon. The word comes from the Greek hex meaning six…” – this is a plausible continuation of internet text, but not the concise answer we wanted. The few-shot exemplars constrain the model to follow a specific response pattern.

Recall: What is the difference between few-shot prompting and fine-tuning? Which one changes the model’s parameters?

Apply: Write a 3-shot prompt that teaches a model to translate English to French. Your prompt should include three English-French pairs, followed by a new English sentence for the model to translate.

Extend: Few-shot prompting works well for factual

questions but poorly for multi-step math problems. Why might showing the

model Q: ... A: 42 fail to help when the answer requires

several intermediate calculations? Think about what information is

missing from the exemplar.

Standard few-shot prompting works well for tasks where the answer can be produced in one mental step – factual recall, classification, simple translation. But it breaks down on tasks that require chaining multiple reasoning steps together. This lesson examines why.

Imagine you’re helping a friend solve a word problem over text message, but you’re only allowed to send the final number – no work shown. Your friend sees:

Problem: A store has 3 shelves with 8 books each. 5 books are sold. How many remain?

Answer: 19.Even if you send five such examples, your friend sees only inputs and outputs. They never see the intermediate reasoning: “3 shelves times 8 books = 24 total. 24 minus 5 sold = 19.” Without that bridge from question to answer, your friend has to figure out the entire reasoning chain from scratch every time.

Language models face the same problem. When you show them question-answer pairs for math word problems, each exemplar is a complete input mapped directly to a final number. The model must somehow infer the multi-step reasoning required – but the exemplars give no evidence of what those steps look like.

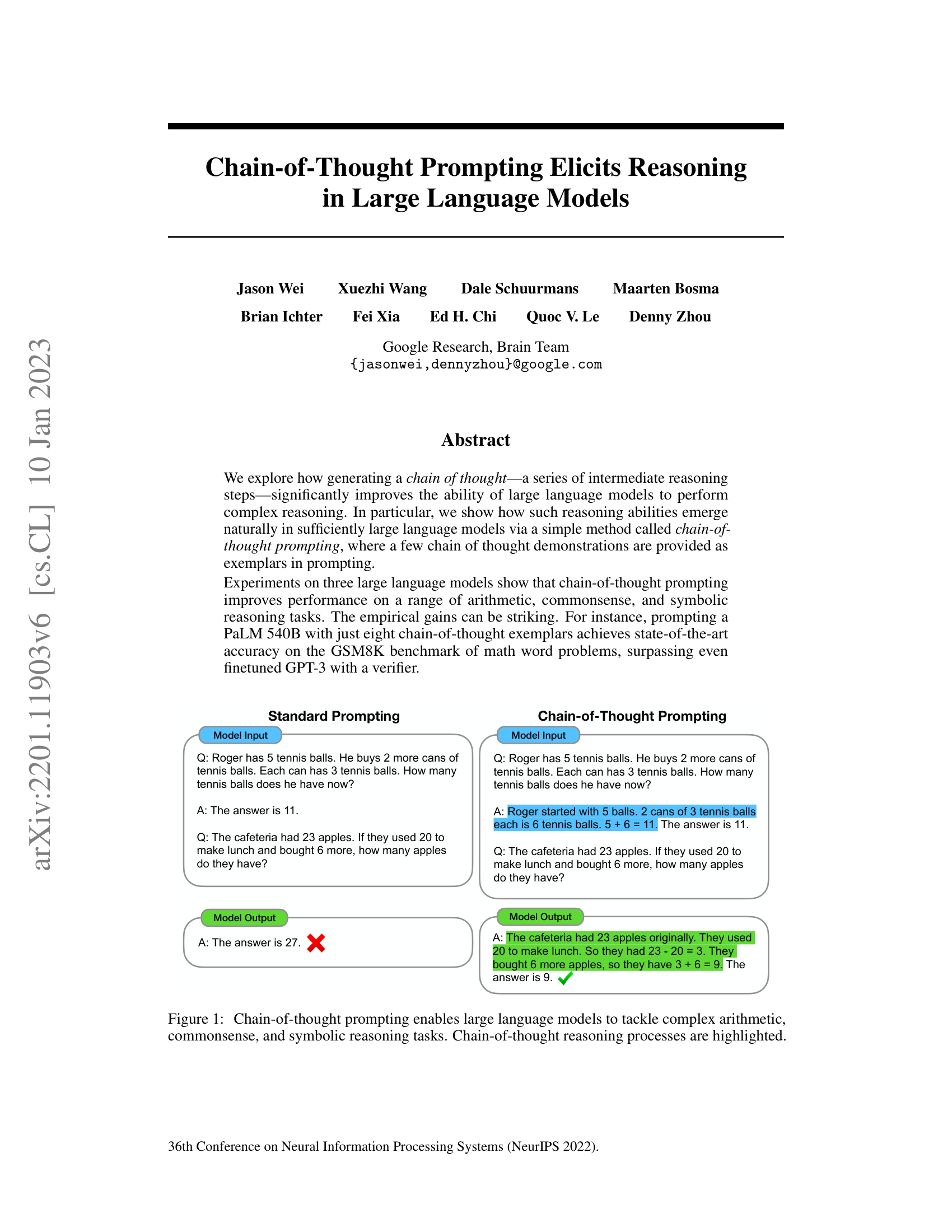

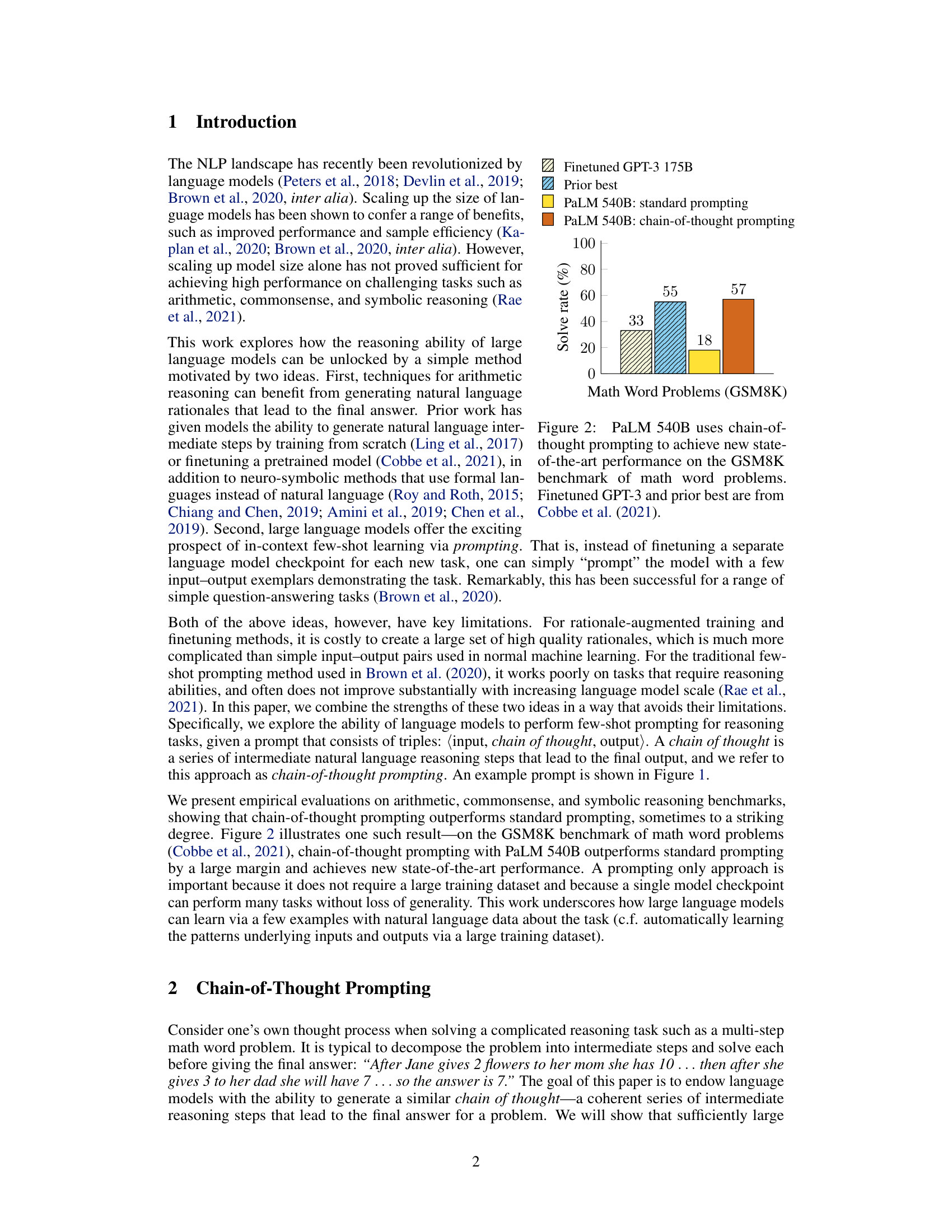

Consider this standard prompt applied to a word problem:

Q: Roger has 5 tennis balls. He buys 2 more cans of

tennis balls. Each can has 3 tennis balls. How many

tennis balls does he have now?

A: The answer is 11.

Q: The cafeteria had 23 apples. If they used 20 to make

lunch and bought 6 more, how many apples do they have?

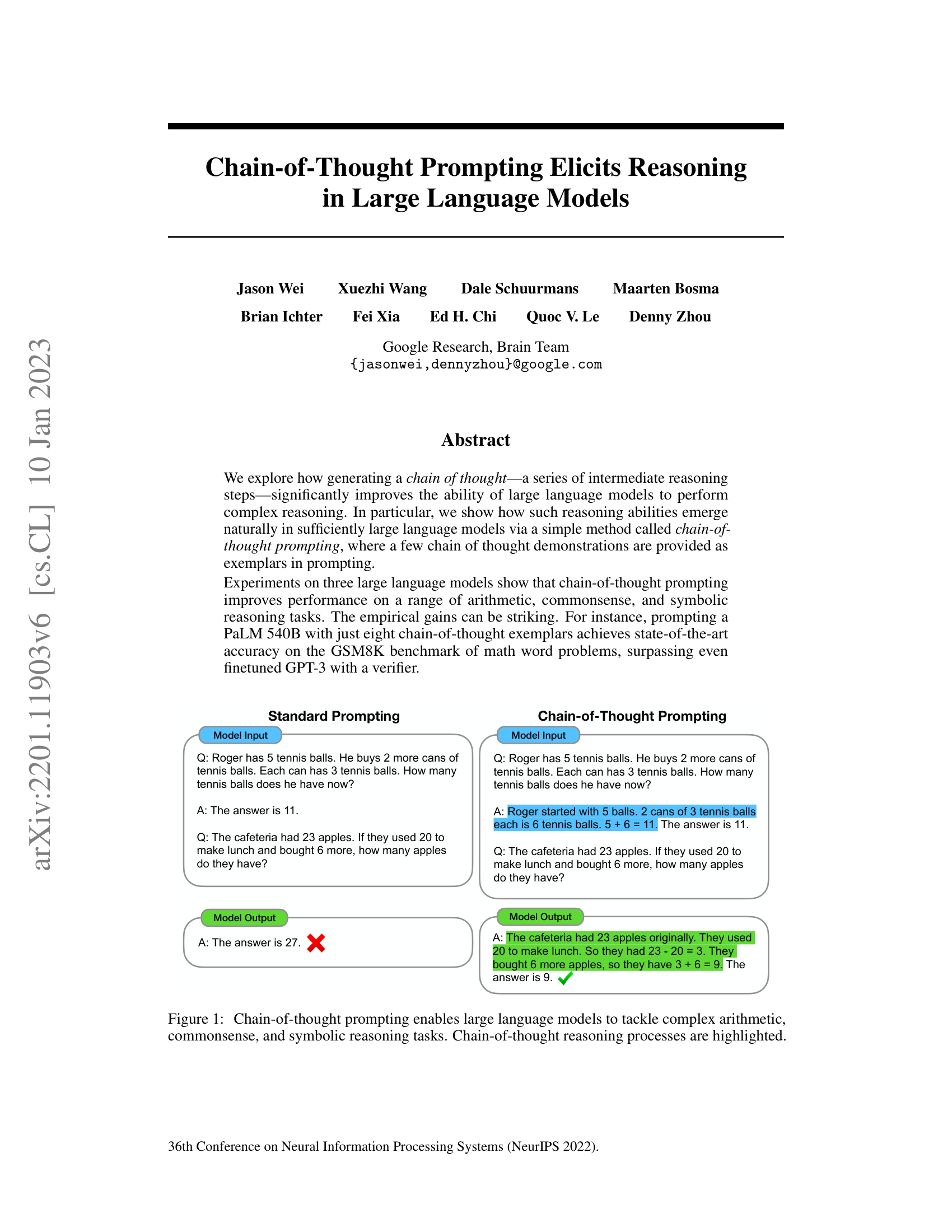

A:With standard prompting, a model like PaLM 540B (Pathways Language Model, a large language model from Google) answers: “The answer is 27.” That’s wrong – the correct answer is 9 (23 - 20 + 6). The model learned the format from the exemplars (start with “The answer is”) but failed at the reasoning.

This isn’t a knowledge gap. The model “knows” how to subtract and add. The problem is structural: the model must generate the final answer directly from the question, without any intermediate computation. For a problem requiring 3 steps, the model must somehow perform all 3 steps internally in a single forward pass (one complete run of the input through all of the network’s layers), then produce the correct number as the very next token.

The empirical evidence is stark. On GSM8K, a benchmark of grade-school math word problems requiring 2-8 reasoning steps, PaLM 540B with standard prompting scores only 17.9%. For comparison, the same model scores over 90% on single-step arithmetic. The gap widens as problems get harder – more reasoning steps means more internal computation the model must perform “silently.”

This failure mode is not unique to math. Commonsense reasoning (“Would a pear sink in water?” requires knowing pear density vs. water density and applying the density comparison rule), symbolic manipulation (“Take the last letters of the words in ‘Amy Brown’ and concatenate them” requires processing each word separately then combining results), and logical deduction all require multi-step reasoning that standard prompting cannot reliably elicit.

Let’s trace through why standard prompting fails on a specific problem. The test question is:

Q: Beth has 4 packs of crayons. Each pack has 10 crayons.

She gave away 6 crayons. How many does she have now?The correct reasoning requires three steps:

With standard prompting, the model sees exemplars like

Q: [problem] A: The answer is [number]. It must jump from

the question directly to “The answer is 34” without writing down any

intermediate work. The model might instead:

Each error happens because the model must compress all reasoning into the hidden states (the internal numerical vectors the network passes between its layers) of a single forward pass. There is no “scratch space” for intermediate results.

Recall: Why does standard few-shot prompting fail on multi-step reasoning tasks? What is missing from the exemplars?

Apply: For the following problem, identify the distinct reasoning steps required and explain why a model answering directly (without intermediate steps) might fail: “A farmer has 3 fields. Each field has 12 rows of corn. Each row produces 8 ears. Foxes ate 15 ears. How many ears remain?”

Extend: If the issue is that models can’t perform multi-step reasoning in a single forward pass, could we solve it by simply making the model larger (more parameters)? The paper reports that PaLM 540B with standard prompting scores 17.9% on GSM8K, while a fine-tuned GPT-3 with a verifier scores 55%. What does this tell you about the relationship between model size and reasoning ability?

This is the paper’s key contribution. The idea is disarmingly simple: instead of showing the model just the question and the answer, show it the reasoning steps between the question and the answer. This single change – adding natural language reasoning to the exemplars – transforms the model’s ability to solve multi-step problems.

Go back to the text-message analogy from Lesson 2. This time, instead of sending only the final answer, you show your work:

Problem: A store has 3 shelves with 8 books each. 5 books

are sold. How many remain?

Answer: The store has 3 shelves with 8 books each, so

that's 3 * 8 = 24 books total. After selling 5,

there are 24 - 5 = 19 books remaining. The

answer is 19.Now your friend can see how you moved from the question to the answer. When they encounter a new problem, they can mimic your step-by-step approach.

Chain-of-thought (CoT) prompting does exactly this. Each exemplar in the prompt is augmented with a “chain of thought” – a sequence of intermediate natural language reasoning steps that connect the input to the output. The model then mimics this pattern, producing its own chain of thought before arriving at an answer.

Here is the full comparison from the paper. Standard prompting:

Q: Roger has 5 tennis balls. He buys 2 more cans of

tennis balls. Each can has 3 tennis balls. How many

tennis balls does he have now?

A: The answer is 11.

Q: The cafeteria had 23 apples. If they used 20 to make

lunch and bought 6 more, how many apples do they have?

A: The answer is 27.The model answers 27, which is wrong. Now with chain-of-thought prompting:

Q: Roger has 5 tennis balls. He buys 2 more cans of

tennis balls. Each can has 3 tennis balls. How many

tennis balls does he have now?

A: Roger started with 5 balls. 2 cans of 3 tennis balls

each is 6 tennis balls. 5 + 6 = 11. The answer is 11.

Q: The cafeteria had 23 apples. If they used 20 to make

lunch and bought 6 more, how many apples do they have?

A: The cafeteria had 23 apples originally. They used 20

to make lunch. So they had 23 - 20 = 3. They bought

6 more apples, so they have 3 + 6 = 9. The answer is 9.The model now produces the correct answer (9) by first generating its own reasoning chain.

Formally, standard prompting conditions the output on exemplar pairs \((x_i, y_i)\):

\[P(y \mid x_{\text{test}}, \{(x_i, y_i)\}_{i=1}^{k})\]

Chain-of-thought prompting replaces each output \(y_i\) with a pair \((c_i, a_i)\) where \(c_i\) is the chain of thought and \(a_i\) is the final answer:

\[P(c, a \mid x_{\text{test}}, \{(x_i, c_i, a_i)\}_{i=1}^{k})\]

where:

The critical mechanism is autoregressive generation: the model produces tokens left-to-right, so it generates the chain of thought \(c\) before the answer \(a\). This means the answer is conditioned on the reasoning steps. The intermediate tokens act as “scratch space” – they give the model room to perform computation step by step rather than all at once.

Three important properties make this approach powerful:

The authors constructed just 8 exemplars (each manually written with a chain of thought) and used this single set across five arithmetic benchmarks. They did not perform prompt engineering – the exemplars were written once and used as-is.

Let’s construct a chain-of-thought prompt from scratch for a simple arithmetic task and trace how the model processes it.

Task: Solve word problems about money.

Step 1: Write 2 exemplars with chains of thought.

Q: Maria has $15. She buys 3 notebooks at $2 each.

How much money does she have left?

A: Maria started with $15. She bought 3 notebooks at

$2 each, so she spent 3 * 2 = $6. She has

15 - 6 = $9 left. The answer is 9.

Q: Tom earns $8 per hour. He works for 5 hours and

then spends $12 on lunch. How much does he have?

A: Tom earns 8 * 5 = $40 from working. After spending

$12 on lunch, he has 40 - 12 = $28. The answer is 28.Step 2: Append the test question.

Q: Sara has $20. She buys 2 books at $4 each and a

pen for $3. How much does she have left?

A:Step 3: Trace the model’s generation. The model has learned the pattern: restate the starting amount, compute each purchase, subtract from the total. It generates:

A: Sara started with $20. She bought 2 books at $4 each,

so she spent 2 * 4 = $8 on books. She also bought a

pen for $3. In total she spent 8 + 3 = $11. She has

20 - 11 = $9 left. The answer is 9.Notice how the model followed the pattern from the exemplars: restate the starting condition, compute intermediate values with explicit arithmetic, then give the final answer. Each generated token becomes part of the context for the next token, so the “8” computed in “2 * 4 = $8” is available when computing “8 + 3 = $11”.

Recall: What are the three components of each exemplar in a chain-of-thought prompt? How does this differ from standard few-shot prompting?

Apply: Write a 2-shot chain-of-thought prompt for the following task: determining whether a sentence about sports is plausible. Use these two exemplars: (1) “LeBron James scored a touchdown” (implausible – he’s a basketball player) and (2) “Serena Williams hit an ace” (plausible – she’s a tennis player). Then show the test question: “Wayne Gretzky hit a home run.”

Extend: The paper reports that the exact wording of the chain of thought matters less than you might expect – three different annotators wrote different reasoning chains for the same exemplars, and all substantially outperformed standard prompting. Why might the structure of reasoning (step-by-step decomposition) matter more than the wording (specific sentences)?

Chain-of-thought prompting doesn’t always work. In fact, for small models, it makes performance worse. The paper’s most striking finding is that chain-of-thought reasoning is an “emergent ability” – it appears suddenly at a specific model scale rather than improving gradually.

Consider learning to ride a bicycle. A child might practice for weeks with training wheels, making slow progress. Then one day the training wheels come off, and within minutes they’re riding freely. The skill doesn’t emerge in proportion to practice time – it appears suddenly once enough foundational coordination is in place.

Emergent abilities in language models work similarly. As you scale up a model (add more parameters and train on more data), most capabilities improve gradually – translation gets slightly better, summarization improves incrementally. But some capabilities show a sharp transition: they’re effectively absent below a threshold and then appear suddenly above it.

The paper tested chain-of-thought prompting across models ranging from 422 million to 540 billion parameters, spanning five model families: GPT-3 (OpenAI), LaMDA (Language Model for Dialogue Applications, Google), PaLM (Google), UL2 (Unified Language Learner, a model trained on a mixture of pre-training objectives, Google), and Codex (a GPT-3 variant fine-tuned on code, OpenAI). The results reveal a clear pattern that can be described as:

\[\Delta(N) \approx \begin{cases} \leq 0 & \text{if } N < 10^{11} \\ > 0 \text{ (and increasing)} & \text{if } N \geq 10^{11} \end{cases}\]

where:

Below approximately 100 billion parameters, \(\Delta(N) \leq 0\) – chain-of-thought prompting provides no benefit, and sometimes hurts. Above this threshold, \(\Delta(N) > 0\) and grows with scale.

What happens when small models attempt chain-of-thought reasoning? They produce fluent but logically incoherent text. A small model might generate: “Roger started with 5 balls. He bought 2 cans. 2 + 5 = 12. The answer is 12.” The sentence structure looks correct, but the arithmetic is nonsensical. The model has learned to imitate the format of reasoning without being able to perform the reasoning. This actually hurts performance – the incoherent chain of thought leads to worse answers than just guessing directly.

The concrete numbers from the paper are dramatic. On GSM8K:

| Model | Parameters | Standard | CoT | \(\Delta\) |

|---|---|---|---|---|

| LaMDA | 422M | 2.2% | 1.1% | -1.1 |

| LaMDA | 2B | 3.1% | 2.6% | -0.5 |

| LaMDA | 8B | 4.1% | 5.0% | +0.9 |

| LaMDA | 68B | 8.3% | 10.4% | +2.1 |

| LaMDA | 137B | 14.3% | 17.1% | +2.8 |

| PaLM | 8B | 4.1% | 2.3% | -1.8 |

| PaLM | 62B | 16.5% | 21.4% | +4.9 |

| PaLM | 540B | 17.9% | 56.9% | +39.0 |

The jump from PaLM 62B (+4.9) to PaLM 540B (+39.0) is extraordinary. Performance doesn’t scale linearly – it accelerates. The 540B model is roughly 9x larger than the 62B model, but the CoT benefit is roughly 8x larger.

This pattern – an emergent threshold followed by accelerating returns – has a practical consequence: you cannot predict whether chain-of-thought prompting will work for your task by testing on a smaller, cheaper model. A small model might show zero benefit or even harm, while the same prompt on a sufficiently large model produces dramatic improvements.

Let’s trace what happens when models of different sizes encounter the same chain-of-thought prompt. The test question:

Q: Beth bakes 4 batches of cookies. Each batch makes

12 cookies. She eats 3 and gives 5 to her neighbor.

How many cookies does she have?Small model (2B parameters) might generate:

A: Beth bakes 4 batches. Each batch has 12 cookies.

She eats 3 cookies. 4 + 12 = 18. She gives 5 to

her neighbor. 18 - 3 = 10. The answer is 10.The chain of thought is fluent but wrong: “4 + 12 = 18” doesn’t make sense (should be 4 * 12 = 48), and the subsequent steps don’t follow logically.

Large model (540B parameters) generates:

A: Beth bakes 4 batches of 12 cookies each, so she

makes 4 * 12 = 48 cookies. She eats 3, so she has

48 - 3 = 45. She gives 5 to her neighbor, so she

has 45 - 5 = 40. The answer is 40.Each step follows logically from the previous one. The intermediate values (48, 45, 40) are computed correctly and carried forward.

The small model has learned the syntax of chain-of-thought reasoning (restate, compute, conclude) but not the semantics (correct arithmetic, proper operation selection). The large model has both.

Recall: At roughly what parameter count does chain-of-thought prompting begin to help? What happens below this threshold?

Apply: You’re building a customer-facing application that uses an LLM to answer math questions from students. You have access to models with 7B, 70B, and 540B parameters. Based on the paper’s findings, which model(s) would you consider for chain-of-thought prompting, and why?

Extend: The paper shows that the CoT benefit is larger for harder tasks (GSM8K, which requires multi-step reasoning, gains +39 percentage points, while SingleOp, which requires one step, gains nearly nothing). Propose a hypothesis for why difficulty and CoT benefit are correlated. What does this suggest about what chain-of-thought is actually doing inside the model?

So chain-of-thought prompting dramatically improves reasoning at sufficient scale. But why does it work? Is it the natural language reasoning? The extra tokens? The equations? The paper runs a careful ablation study to isolate what actually matters.

When a scientist discovers that a drug cures a disease, the next question is: which ingredient is doing the work? You don’t just declare victory – you systematically remove or replace each component to find the active ingredient. This is an ablation study: remove parts of a method one at a time and measure what breaks.

The paper tests three alternative prompting strategies against full chain-of-thought prompting, each designed to test a specific hypothesis about why CoT works.

Hypothesis 1: “It’s the equations.” Maybe chain-of-thought works because it produces a mathematical equation that the model can then evaluate. To test this, the authors created an “equation only” variant where the exemplar’s chain of thought is replaced with just the mathematical equation:

Standard: Q: [problem] A: The answer is 11.

Equation: Q: [problem] A: 5 + (2 * 3) = 11. The answer is 11.

Full CoT: Q: [problem] A: Roger started with 5 balls. 2 cans

of 3 is 6. 5 + 6 = 11. The answer is 11.Result: Equation-only helps on simple 1-2 step problems (where the equation is easy to derive from the question), but fails on complex problems like GSM8K. The natural language reasoning is doing something that pure equations cannot – it bridges the semantic gap between the word problem and the math.

Hypothesis 2: “It’s the extra computation.” Maybe

the chain of thought just gives the model more tokens to “think” – more

forward passes of the network, more computation. The content of those

tokens might not matter. To test this, the authors created a “variable

compute only” variant where the chain of thought is replaced with a

sequence of dots (...) equal in length to the chain of

thought:

Variable compute: Q: [problem] A: .................. The answer is 11.

Full CoT: Q: [problem] A: Roger started with 5 balls. 2 cans

of 3 is 6. 5 + 6 = 11. The answer is 11.Result: Dots perform about the same as standard prompting. Extra tokens alone don’t help – the content of those tokens matters. The model isn’t benefiting from more computation time; it’s benefiting from the specific reasoning expressed in the intermediate text.

Hypothesis 3: “It’s knowledge activation.” Maybe the chain of thought simply primes the model to access relevant knowledge stored during pretraining, and the sequential reasoning doesn’t actually matter. To test this, the authors placed the chain of thought after the answer instead of before it:

After answer: Q: [problem] A: The answer is 11. Roger started with

5 balls. 2 cans of 3 is 6. 5 + 6 = 11.

Full CoT: Q: [problem] A: Roger started with 5 balls. 2 cans

of 3 is 6. 5 + 6 = 11. The answer is 11.Result: Reasoning after the answer performs about the same as standard prompting. This is the most revealing ablation. The chain of thought must come before the answer because the model generates tokens left-to-right. When reasoning comes first, the answer is conditioned on the reasoning tokens. When reasoning comes after, the answer was already generated – the reasoning is just post-hoc narration that doesn’t influence the output.

The ablation results on GSM8K for PaLM 540B:

| Prompting Strategy | GSM8K Accuracy |

|---|---|

| Standard prompting | 17.9% |

| Equation only | ~22% |

| Variable compute only (dots) | ~18% |

| Reasoning after answer | ~19% |

| Chain-of-thought prompting | 56.9% |

Only the full chain-of-thought prompting – natural language reasoning steps placed before the answer – produces the dramatic improvement. This tells us that all three properties are essential: (1) the reasoning must be in natural language (not just equations), (2) the content of the tokens matters (not just the count), and (3) the reasoning must precede the answer (not follow it).

Let’s design our own mini-ablation for a symbolic reasoning task: last letter concatenation. The test question is: “Take the last letters of the words in ‘Amy Brown’ and concatenate them.”

Standard prompting exemplar:

Q: Take the last letters of "Elon Musk" and concatenate them.

A: The answer is nk.The model must somehow extract last letters and concatenate them in one step. It might guess “nk” for common names but fail on unusual ones.

Equation-only exemplar (there is no equation for string manipulation – this hypothesis doesn’t even apply to symbolic tasks):

Q: Take the last letters of "Elon Musk" and concatenate them.

A: last("Elon") + last("Musk") = nk. The answer is nk.Variable compute exemplar:

Q: Take the last letters of "Elon Musk" and concatenate them.

A: .... .... .... .... The answer is nk.Chain-of-thought exemplar:

Q: Take the last letters of "Elon Musk" and concatenate them.

A: The last letter of "Elon" is "n". The last letter of

"Musk" is "k". Concatenating them is "nk". The answer is nk.Now for the test question (“Amy Brown”), only the full CoT version explicitly demonstrates the process: isolate each word, extract its last letter, then combine. The model can replicate this process step by step: “The last letter of ‘Amy’ is ‘y’. The last letter of ‘Brown’ is ‘n’. Concatenating them is ‘yn’. The answer is yn.”

With PaLM 540B, the paper reports 99.4% accuracy on this task with chain-of-thought prompting versus 2.1% with standard prompting for in-domain (2-word names). The chain-of-thought approach also generalizes to out-of-domain inputs: 94.8% accuracy on 4-word names, despite only seeing 2-word exemplars. The step-by-step structure transfers to longer inputs because the model learns the procedure (extract last letter of each word, concatenate) rather than memorizing specific answers.

Recall: Name the three ablation variants tested in the paper and what hypothesis each one was designed to test.

Apply: A colleague argues: “Chain-of-thought prompting works because the model just needs more tokens to compute the answer – the actual words don’t matter.” Design an experiment to disprove this claim. What prompting variant would you test, and what result would you expect?

Extend: The “reasoning after answer” ablation shows that the order of tokens matters – reasoning must come before the answer. This is a direct consequence of autoregressive generation. Could you design a model architecture where reasoning after the answer would help? What would need to change about how the model generates text?

Chain-of-thought prompting requires no model training or fine-tuning. What are the advantages and disadvantages of this compared to approaches that fine-tune models on datasets of reasoning problems with worked solutions (like Cobbe et al., 2021)?

The paper uses 8 exemplars for most benchmarks and uses greedy decoding (always picking the most probable next token). Why might the authors have chosen greedy decoding instead of sampling? What risks would sampling introduce for a reasoning task?

On GSM8K, the paper reports that 46% of incorrect answers had “almost correct” chains of thought (one small error in arithmetic or one missing step), while 54% had major errors in semantic understanding. What does this breakdown tell you about the types of mistakes the model makes? How might you design a follow-up method to address each error type?

The paper acknowledges that chain-of-thought prompting does not prove the model is “actually reasoning” – it might be pattern-matching against similar reasoning chains from its training data. How would you design an experiment to test whether the model is genuinely reasoning vs. pattern-matching? What kind of problems would distinguish these two explanations?

This paper directly enabled two important follow-up techniques: self-consistency (Wang et al., 2022 – sampling multiple chains of thought and taking the majority answer) and zero-shot chain-of-thought (Kojima et al., 2022 – appending “Let’s think step by step” without any exemplars). How does each of these methods address a specific limitation of the original chain-of-thought prompting approach?

Build a chain-of-thought reasoning simulator that decomposes multi-step arithmetic word problems into intermediate steps, solving them one at a time – demonstrating why step-by-step decomposition succeeds where direct answering fails.

You will implement two “solvers” for arithmetic word problems represented as sequences of operations:

Each problem is represented as a starting value and a sequence of operations. The direct solver adds noise to simulate the error introduced by computing everything at once (more operations = more accumulated error). The CoT solver processes one step at a time with much lower per-step noise.

This models the paper’s key finding: direct answering fails on multi-step problems while step-by-step reasoning succeeds, and the gap widens as problems get harder.

import numpy as np

# === Problem representation ===

# A problem is a starting value and a list of (operation, operand) tuples.

# Operations: "add", "sub", "mul"

# Example: start=5, ops=[("mul", 3), ("sub", 2)] means 5*3 - 2 = 13

def make_problem(start, ops):

"""Create a problem dict with the ground-truth answer."""

value = start

steps = [f"Start with {start}"]

for op, operand in ops:

if op == "add":

value = value + operand

steps.append(f"{value - operand} + {operand} = {value}")

elif op == "sub":

value = value - operand

steps.append(f"{value + operand} - {operand} = {value}")

elif op == "mul":

value = value * operand

steps.append(f"{value // operand} * {operand} = {value}")

return {"start": start, "ops": ops, "answer": value, "steps": steps}

def solve_direct(problem, rng, noise_per_step=5.0):

"""

Simulate a model answering directly without intermediate steps.

Error accumulates with problem complexity (number of steps).

TODO: Compute the correct answer, then add Gaussian noise

scaled by the number of steps. The idea: more steps = more

internal computation required = more accumulated error.

Return: (predicted_answer_rounded_to_int, chain_of_thought=None)

"""

# TODO: implement

pass

def solve_cot(problem, rng, noise_per_step=0.5):

"""

Simulate a model reasoning step-by-step with chain of thought.

Each step has small independent noise (errors don't compound

as badly because each step is grounded by the previous result).

TODO: Process each operation one at a time. For each step,

compute the correct result and add small Gaussian noise.

Round to int after each step (simulating discrete token output).

Build up a chain-of-thought string showing each step.

Return: (predicted_answer, chain_of_thought_string)

"""

# TODO: implement

pass

def evaluate(problems, solver_fn, rng, n_trials=50):

"""

Run the solver on each problem multiple times and compute accuracy.

A prediction is correct if it exactly matches the ground-truth answer.

TODO: For each problem, run the solver n_trials times.

Compute accuracy = (number of exact matches) / n_trials.

Return a list of accuracies, one per problem.

"""

# TODO: implement

pass

def run_experiment():

"""Compare direct vs CoT solving across problem difficulties."""

rng = np.random.default_rng(42)

# Create problems of increasing difficulty (1 to 6 steps)

problems = [

make_problem(10, [("add", 5)]), # 1 step

make_problem(8, [("mul", 3), ("sub", 4)]), # 2 steps

make_problem(15, [("sub", 5), ("mul", 2), ("add", 7)]), # 3 steps

make_problem(6, [("mul", 4), ("add", 10), ("sub", 3), ("mul", 2)]), # 4 steps

make_problem(12, [("sub", 2), ("mul", 3), ("add", 8), ("sub", 5),

("mul", 2)]), # 5 steps

make_problem(5, [("mul", 3), ("add", 7), ("sub", 2), ("mul", 4),

("sub", 10), ("add", 6)]), # 6 steps

]

print("Problem Difficulty vs. Accuracy")

print("=" * 55)

print(f"{'Steps':<8}{'Answer':<10}{'Direct':<12}{'CoT':<12}{'Gap':<10}")

print("-" * 55)

direct_accs = evaluate(problems, solve_direct, rng)

cot_accs = evaluate(problems, solve_cot, rng)

for i, prob in enumerate(problems):

n_steps = len(prob["ops"])

gap = cot_accs[i] - direct_accs[i]

print(f"{n_steps:<8}{prob['answer']:<10}{direct_accs[i]:<12.1%}"

f"{cot_accs[i]:<12.1%}{gap:<+10.1%}")

print()

print("Example chain of thought for 4-step problem:")

_, cot_trace = solve_cot(problems[3], rng)

print(cot_trace)

if __name__ == "__main__":

run_experiment()Problem Difficulty vs. Accuracy

=======================================================

Steps Answer Direct CoT Gap

-------------------------------------------------------

1 15 82.0% 100.0% +18.0%

2 20 56.0% 98.0% +42.0%

3 27 38.0% 96.0% +58.0%

4 62 24.0% 94.0% +70.0%

5 61 12.0% 92.0% +80.0%

6 76 8.0% 90.0% +82.0%

Example chain of thought for 4-step problem:

Step 1: 6 * 4 = 24

Step 2: 24 + 10 = 34

Step 3: 34 - 3 = 31

Step 4: 31 * 2 = 62

Final answer: 62The exact percentages will vary with the random seed, but the pattern should be clear: direct accuracy drops sharply as problems get harder (more steps), while CoT accuracy remains high. The gap between them widens with difficulty – matching the paper’s finding that “chain-of-thought prompting has larger performance gains for more-complicated problems.”