By the end of this course, you will be able to:

Every large language model has a hard limit on how much text it can “see” at once. This limit, called the context window, determines how many tokens (roughly, words and word-pieces) the model processes in a single pass. Everything outside this window is invisible – the model cannot reason about information it cannot see. Understanding this constraint is the foundation for understanding why MemGPT exists.

Think about reading a book through a mail slot. You can slide the book behind the slot and read whatever section is visible, but you cannot see the rest of the book at the same time. If someone asks you about a paragraph that has scrolled past the slot, you have no way to answer – that text is gone from your view. The mail slot is the context window.

In a transformer-based language model (see Attention Is All You Need), the self-attention mechanism computes relationships between every pair of tokens in the input. The computational cost of this operation scales quadratically with the sequence length:

\[\text{Cost} \propto n^2 \cdot d\]

where:

Doubling \(n\) quadruples the compute and memory cost. This is why models have fixed context windows rather than processing arbitrarily long inputs.

The paper provides a concrete table of context window sizes as of early 2024. The approximate number of conversational messages that fit in a context window of \(C\) tokens is:

\[\text{Messages} \approx \frac{C - 1000}{50}\]

where:

This formula reveals the practical limits: with GPT-4’s \(C = 8{,}192\) tokens, you get about 140 messages. With Llama 2’s \(C = 4{,}096\), about 60 messages. A long customer support session or a multi-day conversation easily exceeds these limits.

But the problem is worse than raw capacity suggests. Research by Liu et al. (2023) showed that even when models have large contexts, they struggle to use information placed in the middle of the window – a phenomenon called “lost in the middle” (see Lost in the Middle). Models perform best on information at the very beginning or very end of their context and worst on information in the middle. Simply making the window bigger does not solve the problem.

Let’s calculate message capacities for several models.

GPT-4 with \(C = 8{,}192\) tokens:

\[\text{Messages} = \frac{8192 - 1000}{50} = \frac{7192}{50} = 143.8 \approx 140\]

Llama 2 with \(C = 4{,}096\) tokens:

\[\text{Messages} = \frac{4096 - 1000}{50} = \frac{3096}{50} = 61.9 \approx 60\]

GPT-4 Turbo with \(C = 128{,}000\) tokens:

\[\text{Messages} = \frac{128000 - 1000}{50} = \frac{127000}{50} = 2{,}540 \approx 2{,}600\]

Now consider a real scenario. A legal annual report (SEC Form 10-K) can exceed 1,000,000 tokens. Even GPT-4 Turbo’s 128k window can hold at most 12.8% of that document. And the “lost in the middle” finding tells us that even the portion inside the window will not be processed uniformly – the model will attend more to the beginning and end, potentially missing critical information in the middle.

For conversational agents, 140 messages is about 70 back-and-forth exchanges – maybe 30 minutes of active chatting. A virtual companion that forgets everything after half an hour is not very useful.

Recall: Why does doubling the context window size more than double the computational cost? What mathematical relationship governs this?

Apply: A customer support chatbot uses a model with a 16,000-token context window. The system prompt uses 2,000 tokens (it includes company policies and instructions). The average customer message is 30 tokens, and the average bot response is 80 tokens. How many complete exchanges (one customer message plus one bot response) fit in the remaining context?

Extend: The “lost in the middle” finding suggests that simply increasing context length has diminishing returns for retrieval tasks. If you had a 1,000,000-token context window, what problems would you still face when trying to analyze a document that fits entirely within it? Think about attention patterns and computational cost.

MemGPT’s core insight comes from a different field entirely: operating systems. In the 1960s, computer scientists solved a problem remarkably similar to the context window bottleneck – physical memory (RAM) was too small to hold all running programs. Their solution, virtual memory, created the illusion of unlimited memory by intelligently swapping data between fast RAM and slow disk storage. This lesson teaches the OS concepts that MemGPT adapts.

Imagine you are a chef working at a small kitchen counter. You can only fit three cutting boards on the counter at once, but you have twenty dishes to prepare. You handle this by keeping the three dishes you are actively working on at the counter (main memory) and storing the rest of your ingredients on shelves across the room (disk storage). When you need to start a new dish, you clear one cutting board – saving any partially prepared ingredients back to the shelf – and bring the new dish’s ingredients to the counter.

This is exactly how virtual memory works in an operating system. The key terms are:

Main memory (RAM): The fast, limited workspace. Programs currently being executed live here. The CPU can read and write to RAM in nanoseconds.

Disk storage: The slow, large archive. Data that is not currently needed lives here. Reading from disk takes milliseconds – roughly a million times slower than RAM.

Virtual memory: The illusion that each program has access to a large, contiguous block of memory, even though the physical RAM is much smaller. The OS achieves this by dividing memory into fixed-size blocks called pages.

Paging: The process of moving pages between RAM and disk. When a program accesses a page that is not in RAM, the OS triggers a page fault – it pauses the program, loads the requested page from disk into RAM (possibly evicting another page to make room), and then resumes the program. The program never notices the swap.

Page replacement policy: When RAM is full and a new page must be loaded, the OS must choose which existing page to evict. Common policies include LRU (least recently used), which evicts the page that has not been accessed for the longest time, and FIFO (first in, first out), which evicts the oldest page.

The mapping from OS concepts to MemGPT concepts is direct:

| Operating System | MemGPT |

|---|---|

| CPU | LLM processor (inference engine) |

| RAM (main memory) | Context window (prompt tokens) |

| Disk storage | External databases (archival + recall storage) |

| Virtual memory | Virtual context (illusion of unlimited context) |

| Page fault | LLM function call to retrieve external data |

| Page replacement policy | Queue manager eviction policy |

| System calls | MemGPT function calls |

The critical difference: in a traditional OS, the hardware and OS kernel manage paging automatically – programs do not decide when to page data in or out. In MemGPT, the LLM itself acts as both the program and the memory manager. It decides when to save information to external storage, when to search for old data, and what to keep in its limited context. This is what makes MemGPT’s retrieval “self-directed” rather than externally managed.

Let’s trace a concrete virtual memory scenario, then map it to MemGPT.

OS scenario: A computer has 4 pages of RAM and 3 programs (A, B, C) each needing 2 pages. RAM can hold 4 pages total. Programs A and B are loaded first:

RAM: [A1] [A2] [B1] [B2] (4/4 pages used)

Disk: [C1] [C2]Now program C needs to run. The OS must evict 2 pages to make room. Using FIFO, it evicts the two oldest pages (A1 and A2):

Step 1: Save A1, A2 to disk

Step 2: Load C1, C2 into freed RAM slots

RAM: [C1] [C2] [B1] [B2]

Disk: [A1] [A2]If program A is needed again, the OS triggers a page fault and swaps it back in, evicting something else.

MemGPT equivalent: A chatbot has a context window of 8,000 tokens (4 “pages” worth). It is conversing with a user and the context contains: system instructions (2,000 tokens), working context (1,000 tokens), and conversation messages (5,000 tokens, filling the remaining space).

Context: [System Instructions] [Working Context] [Messages 1-50]

External: (empty)The user sends a new message, but the context is full. The queue manager triggers eviction – it removes the oldest 25 messages, summarizes them, and stores them in recall storage:

Context: [System Instructions] [Working Context] [Summary of 1-25] [Messages 26-51]

External recall storage: [Messages 1-25 verbatim]Later, the user asks “What did we discuss at the start?” The LLM

recognizes it needs old information and calls

recall_storage.search("beginning of conversation"), which

brings relevant old messages back into context – the MemGPT equivalent

of a page fault.

Recall: In the OS-to-MemGPT mapping, what plays the role of RAM? What plays the role of disk? What plays the role of a system call?

Apply: A MemGPT agent has a context window of 16,000 tokens. System instructions use 3,000 tokens, and working context uses 1,000 tokens. The FIFO queue holds messages averaging 60 tokens each. (a) How many messages can the FIFO queue hold at capacity? (b) If the warning threshold is 70% of the context window, at how many messages does the memory pressure warning fire? (c) If a flush evicts 50% of queue capacity, how many messages are evicted?

Extend: Traditional OS page replacement uses policies like LRU (least recently used) – evict the page that has not been accessed for the longest time. MemGPT uses FIFO (first in, first out) instead. Why might FIFO be a better choice for conversation history than LRU? Think about the temporal structure of dialogue versus random memory access patterns in programs.

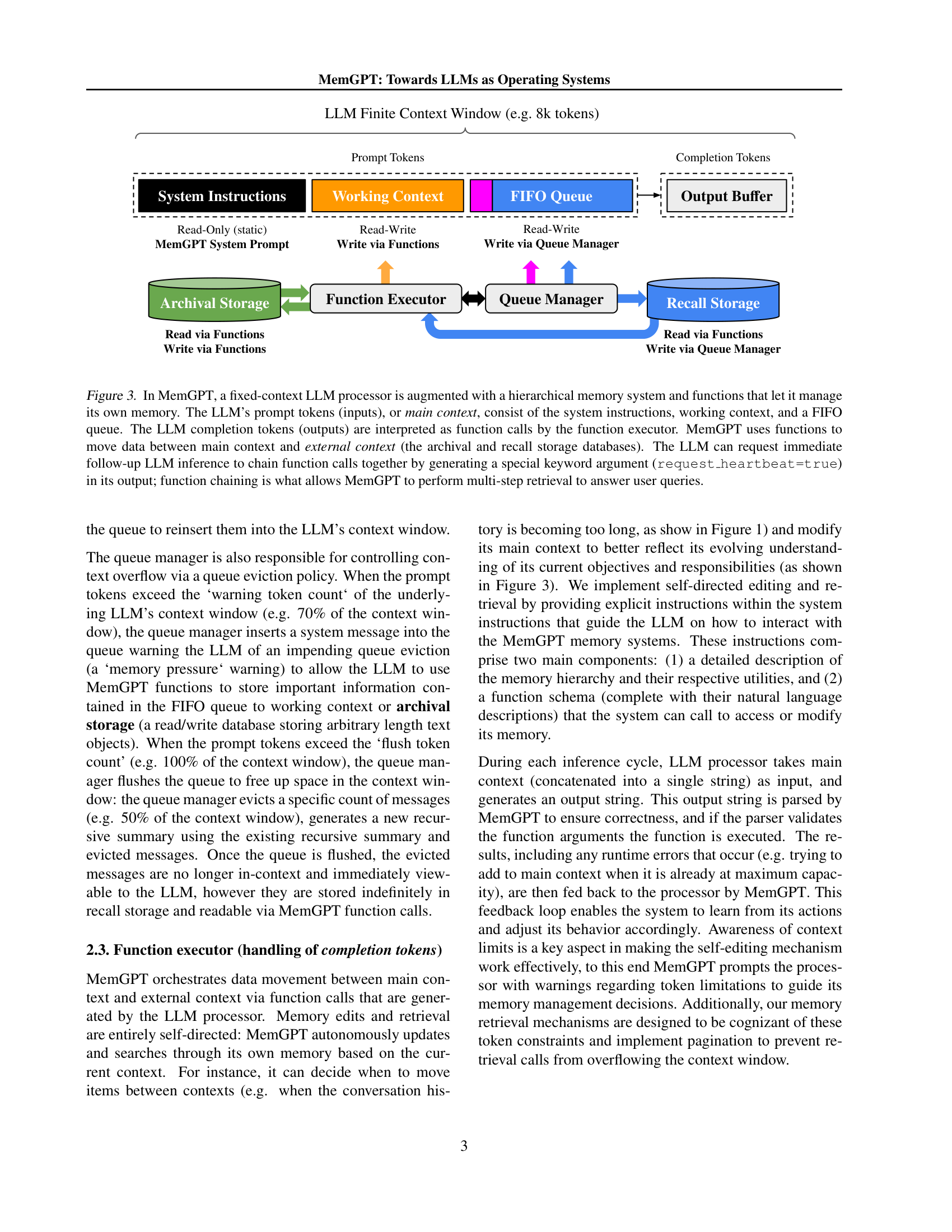

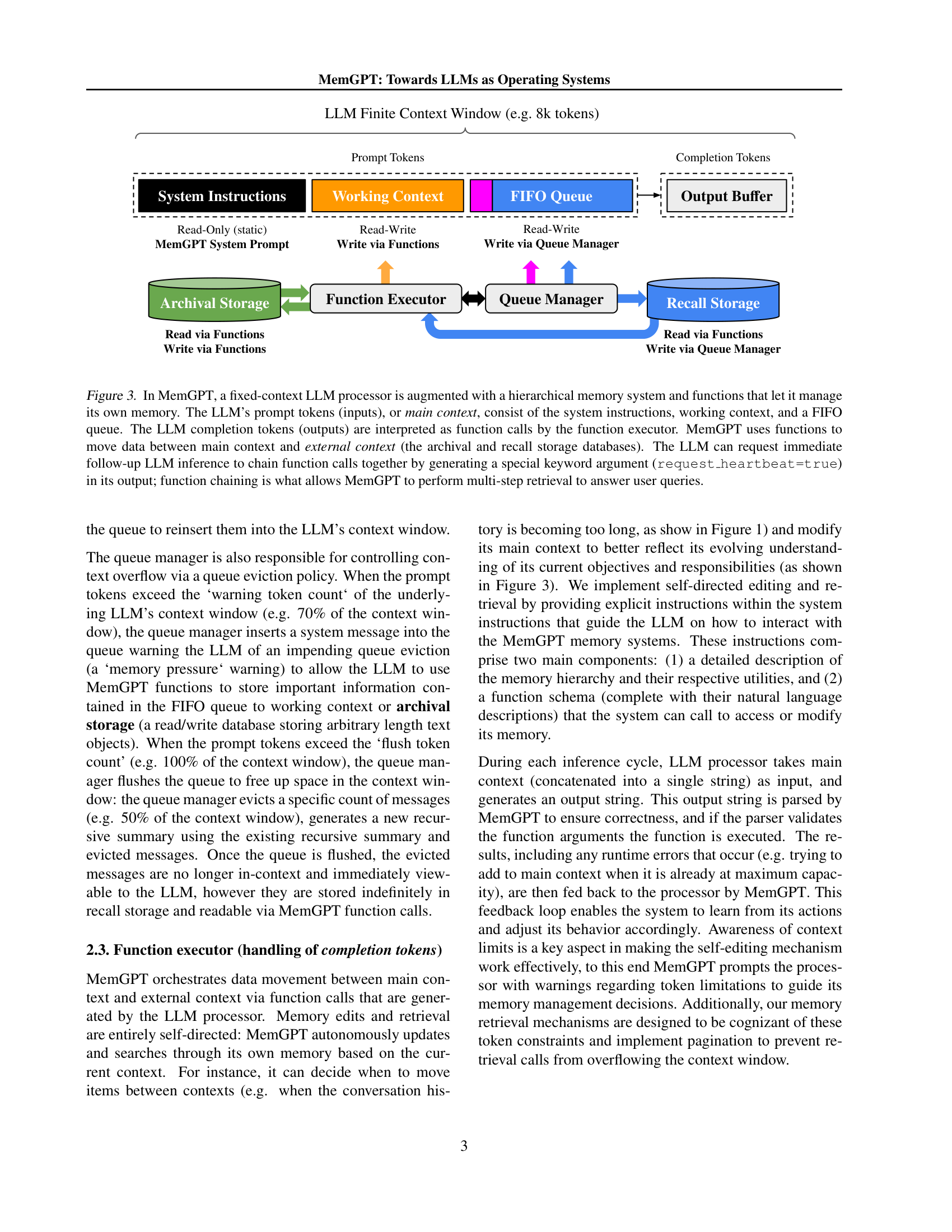

Now that you understand both the context window problem and the virtual memory solution, we can examine how MemGPT structures its memory. MemGPT divides all accessible information into two tiers: main context (fast, small, directly visible to the model) and external context (slow, large, requires explicit retrieval). This lesson covers what lives in each tier and why the boundary exists where it does.

Think about how you organize information at a physical desk. On the desk itself (within arm’s reach) you keep: your current task instructions, a small notepad of important facts, and the last few pages of notes from your current meeting. In the filing cabinet across the room, you keep: a complete archive of every meeting you have ever attended, plus a searchable database of reference documents. You can glance at anything on your desk instantly, but retrieving something from the cabinet requires getting up, walking over, searching, and bringing it back.

MemGPT’s main context is the desk. It consists of three contiguous sections within the LLM’s prompt tokens:

1. System instructions (read-only, static): The rules of how MemGPT works – descriptions of the memory hierarchy, instructions for when and how to use functions, and the agent’s persona. This section never changes during a conversation. Think of it as the employee handbook pinned to your cubicle wall.

2. Working context (read/write via functions, fixed

size): A scratchpad where the LLM stores distilled facts – user

preferences, key biographical details, important notes. The LLM cannot

modify this section by simply generating text; it must call explicit

functions like working_context.append() or

working_context.replace(). This constraint forces

deliberate memory management. Think of it as the sticky notes on your

monitor that you update by hand.

3. FIFO queue (read/write via queue manager): The rolling conversation history – user messages, the LLM’s responses, system alerts, and function call results. New messages are appended to the end; old messages are evicted from the front when space runs out. The very first entry in the queue always holds a recursive summary of everything that has been evicted, so the model retains a compressed sense of the full history. Think of it as the pages of meeting notes on your desk, where you periodically file old pages and keep a one-paragraph summary.

MemGPT’s external context is the filing cabinet. It consists of two databases:

Archival storage (read/write, vector-searchable): A database where the LLM can insert arbitrary text objects and later retrieve them by semantic similarity. It uses cosine similarity search over embedding vectors:

\[\text{sim}(q, d) = \frac{q \cdot d}{\|q\| \, \|d\|}\]

where:

This is the same similarity metric used in standard RAG pipelines (see Retrieval-Augmented Generation), but in MemGPT the LLM triggers the search itself rather than an external pipeline doing it.

Recall storage (read-only for the LLM, written by queue manager): A complete log of every message ever exchanged. When messages are evicted from the FIFO queue, the queue manager stores them here verbatim. The LLM can search recall storage by keyword, date, or content to bring old messages back into the FIFO queue. Think of it as a timestamped transcript of every conversation you have ever had.

Let’s trace how information flows through MemGPT’s memory tiers during a multi-session chat. The model is a personal assistant with a context window of 8,000 tokens.

Session 1: The user mentions their birthday is February 7 and their boyfriend’s name is James.

Main Context (8,000 tokens):

System Instructions: [MemGPT rules, persona] ~2,000 tokens

Working Context: (empty) ~0 tokens

FIFO Queue: [user: "fun my bf james baked me ~200 tokens

a birthday cake"]

External Context:

Archival Storage: (empty)

Recall Storage: (empty)The LLM recognizes these are important facts and calls:

working_context.append("Boyfriend named James")working_context.append("Birthday is February 7")Main Context:

Working Context: "Boyfriend named James. ~20 tokens

Birthday is February 7."

FIFO Queue: [user msg, function calls, results] ~400 tokensSession 3 (weeks later): The user mentions “actually james and i broke up.”

The LLM reads its working context, sees “Boyfriend named James”, and calls:

working_context.replace("Boyfriend named James", "Ex-boyfriend named James")The working context now reflects the updated relationship status, and this correction persists across all future sessions.

Session 5: The user mentions they went to Six Flags. Memory pressure is approaching. The LLM wants to recall previous mentions of Six Flags to craft an engaging response, so it calls:

recall_storage.search("six flags")The results come back:

Showing 3 of 3 results (page 1/1):

[01/24/2024] "lol yeah six flags"

[01/14/2024] "i love six flags been like 100 times"

[10/12/2023] "james and I actually first met at six flags"The LLM uses this to craft a personalized response: “Did you go with James? It’s so cute how you both met there!” – demonstrating long-term memory across sessions.

Recall: Name the three sections of MemGPT’s main context and describe the access pattern (read-only, read/write, etc.) of each.

Apply: An agent has the following working context:

"User name: Alice. Favorite color: blue. Job: software engineer."

The user says “I just switched careers – I’m a data scientist now.”

Write the exact function call the LLM should make to update working

context. Why is replace() more appropriate than

append() here?

Extend: MemGPT uses two separate external storage systems (archival and recall) rather than one unified database. What are the advantages of this separation? Consider the different types of queries each storage serves (semantic similarity vs. temporal/keyword search) and how a single unified store might struggle with both.

When the conversation grows too long, something has to give. The queue manager is MemGPT’s equivalent of the OS kernel’s page replacement system – it monitors context usage, warns the LLM when space is running low, and forcefully evicts old messages when space runs out. This lesson covers the eviction policy, recursive summarization, and the feedback loop between the queue manager and the LLM.

Imagine you are taking notes in a small notebook during an all-day meeting. The notebook only has 20 pages. When you reach page 14 (70% full), you start getting nervous – you highlight the most important points on the current pages and write a quick summary on a sticky note. When you hit page 20, you tear out the first 10 pages, put them in a folder (they are saved, not lost), update your sticky note with a summary of everything removed, and keep writing on the freed pages.

The queue manager does exactly this with two thresholds:

Warning threshold (typically 70% of context window):

When total prompt tokens reach this level, the queue manager injects a

system message into the FIFO queue: “Warning: your context is 70% full.

Consider saving important information to working context or archival

storage.” This gives the LLM a chance to proactively save critical data

before it is evicted. The LLM might respond by calling

working_context.append() to save key facts or

archival_storage.insert() to save lengthy content.

Flush threshold (typically 100% of context window): When tokens hit this limit, the queue manager performs a forced eviction. It removes approximately 50% of the FIFO queue messages (the oldest ones), generates a new recursive summary from the old summary plus the evicted messages, and writes the evicted messages to recall storage.

The recursive summarization is critical. After a flush, the first entry in the FIFO queue is always a summary of everything that has been evicted across all previous flushes. Each flush extends this summary rather than replacing it:

Before first flush:

FIFO[0] = "No prior summary."

FIFO[1..50] = [messages 1 through 50]

After first flush (evicts messages 1-25):

FIFO[0] = "Summary: User discussed their job as a teacher,

mentioned they have two cats named Pepper and Salt,

and asked about vacation destinations."

FIFO[1..25] = [messages 26 through 50]

After second flush (evicts messages 26-38):

FIFO[0] = "Summary: User is a teacher with two cats (Pepper

and Salt). Discussed vacation destinations,

settled on Japan. Talked about learning Japanese

and finding a language tutor."

FIFO[1..12] = [messages 39 through 50]Each recursive summary is generated by a separate LLM call that takes the previous summary plus the newly evicted messages as input and produces a condensed version. This means the summary grows in scope (covering more history) but not necessarily in length – older details get compressed further with each pass.

The cost of this scheme: recursive summarization is lossy. Details are inevitably lost during compression. The paper’s results show that MemGPT’s ability to search recall storage for verbatim old messages dramatically outperforms relying on summaries alone – this is exactly why recall storage exists as a separate system.

Let’s trace a complete eviction cycle with concrete token counts.

Setup: Context window \(C = 8{,}192\) tokens. System instructions = 2,000 tokens. Working context = 500 tokens. Warning threshold = \(0.7 \times 8192 = 5{,}734\) tokens. Flush threshold = \(8{,}192\) tokens. Average message = 50 tokens.

Available FIFO capacity: \(8192 - 2000 - 500 = 5{,}692\) tokens, or about 113 messages.

Step 1: The conversation reaches message 95. Total tokens: \(2000 + 500 + (95 \times 50) = 7{,}250\) tokens. This exceeds the warning threshold of 5,734.

The queue manager injects:

"[System] Memory pressure warning: context is 88% full."

Step 2: The LLM reads the warning and decides to save critical facts. It calls:

working_context.append("User is traveling to Tokyo in March")archival_storage.insert("User's detailed itinerary: Day 1: Shibuya...")Working context grows to 550 tokens. Some important details are now safely stored.

Step 3: The conversation continues to message 112. Total tokens: \(2000 + 550 + (112 \times 50) = 8{,}150\) tokens. Close to the flush threshold.

Step 4: Message 113 arrives. Total tokens would be \(8{,}150 + 50 = 8{,}200 > 8{,}192\). The flush threshold is hit.

Step 5: The queue manager evicts the oldest ~56 messages (50% of queue). It calls a separate LLM to generate a recursive summary from the old summary + messages 1-56. The evicted messages are stored verbatim in recall storage.

After flush:

System Instructions: 2,000 tokens

Working Context: 550 tokens

FIFO[0] (summary): ~200 tokens (new recursive summary)

FIFO[1..57]: 2,850 tokens (messages 57-113)

Total: 5,600 tokens (68% -- below warning threshold)The agent can now continue the conversation with room to spare, and

it can retrieve any of the evicted messages via

recall_storage.search().

Recall: What are the two threshold percentages used by MemGPT’s queue manager, and what happens at each one?

Apply: A MemGPT agent has a 16,000-token context window. System instructions use 3,000 tokens, working context uses 1,000 tokens, and each message averages 40 tokens. (a) How many messages fit in the FIFO queue at full capacity? (b) At what message count does the 70% warning fire? (c) After a flush that evicts 50% of the queue, roughly how many messages remain?

Extend: Recursive summarization compresses history, but each compression loses detail. After 10 flushes, the summary covers the entire conversation but may have lost critical specifics. Propose a modification to the eviction policy that reduces information loss. Consider: what if the LLM could choose which messages to evict (like LRU) rather than always evicting the oldest (FIFO)?

In standard RAG (see Retrieval-Augmented Generation), an external pipeline fetches documents and stuffs them into the prompt before the model runs. The model has no say in what gets retrieved or when. MemGPT inverts this: the LLM itself decides when to retrieve, what queries to make, and how many pages of results to examine. This self-directed retrieval is what separates MemGPT from prior approaches and is enabled by function calling – the ability of an LLM to generate structured outputs that invoke external tools.

Think about the difference between a student who is handed a pre-selected reading list (standard RAG) and a student who goes to the library, searches the catalog, checks out books, skims them, and decides whether to read more deeply or search for something different (MemGPT). The second student can adapt their research strategy based on what they find. If the first search returns irrelevant results, they try a different query. If one book references another, they go find that one too.

MemGPT provides the LLM with a set of functions it can call, similar to system calls in an operating system:

| Function | Purpose |

|---|---|

send_message(text) |

Send a response to the user |

working_context.append(text) |

Add a fact to the working context scratchpad |

working_context.replace(old, new) |

Update an existing fact in working context |

archival_storage.insert(text) |

Store text in the vector-searchable archive |

archival_storage.search(query, page) |

Search the archive by semantic similarity |

recall_storage.search(query) |

Search the message history log |

During each inference cycle, the LLM generates text that the function

executor parses as a function call. The function is executed, its result

is appended to the FIFO queue, and – here is the key mechanism – the LLM

can request an immediate follow-up inference by including

request_heartbeat=true in the function call.

Function chaining via the heartbeat flag is what enables multi-step operations. Without it, the LLM would execute one function and then wait for the next user message. With it, the LLM can chain together a sequence like:

archival_storage.search("Nobel Prize physics") with

request_heartbeat=truearchival_storage.search("Nobel Prize physics", page=2)

with request_heartbeat=truesend_message("Wilhelm Conrad Rontgen won the first Nobel Prize in Physics in 1901.")All five steps happen within a single user turn. The user asked one question and received one answer, but behind the scenes the LLM executed three function calls across three inference passes.

This is conceptually similar to the ReAct framework (see ReAct), which interleaves reasoning with actions in a thought-action-observation loop. MemGPT’s heartbeat mechanism serves the same purpose – it lets the model reason about intermediate results and decide what to do next – but specialized for memory management rather than general task execution.

The pagination design deserves attention. When

archival_storage.search() returns results, it shows a fixed

number per page (e.g., 10) and reports the total count and current page

number: “Showing 10 of 124 results (page 1/13).” This prevents a single

retrieval from flooding the context window. The LLM can page through

results incrementally, and at each step it can decide: “I found what I

need” (stop paging) or “I need to look further” (request next page).

Let’s trace a complete function chaining sequence for a document QA task. The user has uploaded 124 Wikipedia articles to archival storage and asks: “Who won the first Nobel Prize in physics?”

User message enters FIFO queue:

"Who won the first Nobel Prize in physics?"

Inference pass 1: The LLM reads the question and decides to search archival storage.

LLM output: archival_storage.search("nobel physics", request_heartbeat=true)Function executor runs the search. Results appended to FIFO:

"Showing 10 of 124 results (page 1/13):

'The Nobel Prizes, beginning in 1901, and the...'

'This award is administered by the Nobel Foundation...'

..."Because request_heartbeat=true, the queue manager

immediately triggers another inference.

Inference pass 2: The LLM reads the results. The answer is not clearly stated on page 1. It decides to check page 2.

LLM output: archival_storage.search("nobel physics", page=2, request_heartbeat=true)Results:

"Showing 10 of 124 results (page 2/13):

'The Nobel Prize in Physics is a yearly award given...'

'The 1901 Nobel in physics was awarded to Wilhelm...'

..."Inference pass 3: The LLM finds the answer in the page 2 results.

LLM output: send_message("Wilhelm Conrad Rontgen won the first Nobel Prize

in Physics in 1901.")No heartbeat requested. The system yields and waits for the next user message.

Total cost: 3 LLM inference passes for one user question. This is the latency tradeoff – MemGPT trades speed for the ability to search beyond the context window.

Recall: What does the

request_heartbeat=true flag do, and why is it essential for

multi-step retrieval?

Apply: A user asks a MemGPT agent: “What’s the name of the restaurant I mentioned during our conversation last Tuesday?” Trace the function calls the LLM would likely make. Consider: should it search archival storage or recall storage? Why?

Extend: MemGPT with GPT-3.5 performs substantially worse than with GPT-4 across all tasks because GPT-3.5 struggles with function calling. What specific failure modes might a weaker model exhibit when trying to chain function calls? Consider: incorrect function names, wrong arguments, failing to request heartbeats, or stopping the search too early.

This lesson brings together all the pieces – context window constraints, the OS analogy, the two-tier memory hierarchy, the queue manager, and function chaining – into the complete MemGPT system. The paper’s key contribution is not any single component but the way they fit together: an event-driven architecture where the LLM serves as both processor and memory manager, creating the illusion of unlimited context from a fixed-size window.

Think about how a full operating system works. The OS does not do just one thing. It coordinates: the CPU runs programs, the memory manager pages data between RAM and disk, the interrupt handler responds to events (keyboard input, timer ticks, disk operations completing), and system calls let programs request OS services. No single component is revolutionary – the innovation is how they work together as a coherent system.

MemGPT works the same way. The complete control flow is event-driven:

Events trigger LLM inference. An event can be: a user message, a system alert (like a memory pressure warning), a user interaction (a document upload completing), or a timed event (a scheduled wake-up that lets MemGPT run autonomously without user input). Each event is converted to a plain text message and appended to the FIFO queue.

The inference cycle processes each event:

request_heartbeat=true, go to step 2 (triggering

another inference cycle). Otherwise, yield and wait for the next

event.The LLM’s output is always a function call – it

never generates raw text that goes directly to the user. Even sending a

message to the user requires calling send_message(). This

design ensures that every LLM action passes through the function

executor, which validates arguments, handles errors, and manages the

feedback loop.

Error handling is part of the feedback loop. If the LLM tries to append to working context when it is already at maximum capacity, the function executor returns an error message like: “Error: working context is full (500/500 tokens). Consider using working_context.replace() to update existing entries or archival_storage.insert() to offload data.” This error message goes into the FIFO queue, and on the next inference pass, the LLM can adjust its strategy.

What makes this different from standard RAG? In standard RAG, retrieval happens once, before the model runs. The pipeline fetches the top-\(K\) documents, stuffs them into the prompt, and the model generates a response. If the retrieved documents do not contain the answer, there is no second chance.

In MemGPT, retrieval is iterative and self-directed. The LLM can:

The paper demonstrates this advantage dramatically in the nested

key-value retrieval task. Given 140 UUID (universally unique identifier

– a 128-bit random ID like 5b8a3f2e-...) key-value pairs

where values may themselves be keys (requiring chained lookups),

baseline models hit 0% accuracy at 1-3 nesting levels because they

cannot perform multi-hop reasoning within a single context. MemGPT with

GPT-4 maintained near-perfect accuracy across all nesting levels (0

through 4) by executing sequential function calls to chain the lookups

together.

The experimental results across tasks:

| Task | Baseline (GPT-4 Turbo) | MemGPT (GPT-4 Turbo) | Improvement |

|---|---|---|---|

| Deep memory retrieval | 35.3% accuracy | 93.4% accuracy | +58.1 pp |

| Conversation opener | 0.857 SIM-1 | 0.857 SIM-1 | comparable |

| Nested KV (3 levels) | 0% accuracy | ~100% accuracy | total failure vs. success |

Let’s trace the complete MemGPT system through a multi-session chat scenario from end to end.

Setup: GPT-4 with \(C = 8{,}192\). System instructions = 2,000 tokens. Working context starts empty (0/500 tokens). Warning threshold = 5,734 tokens. Flush threshold = 8,192 tokens.

Session 1, Message 1: User says: “Hey! My name is Alice and my birthday is March 15.”

Queue manager appends message to FIFO (tokens: 2,000 + 0 + 20 = 2,020).

Below warning threshold. No alert.

LLM processes context, decides to save important facts:

working_context.append("User name: Alice. Birthday: March 15.")

request_heartbeat=trueFunction executor updates working context (+50 tokens). Result appended to FIFO.

Heartbeat triggers another inference.

LLM responds:

send_message("Nice to meet you, Alice! I'll remember that --

March 15, a fellow Pisces!")No heartbeat. Yield. Wait for next event.

Session 1, Messages 2-90: Conversation continues normally. Context fills up.

Session 1, Message 91: Total tokens hit 5,800, exceeding the warning threshold.

Queue manager injects:

"[System] Memory pressure: context is 71% full."

LLM reads the warning and proactively saves information:

archival_storage.insert("Alice discussed her trip to Japan last

summer, visiting temples in Kyoto and

eating ramen in Tokyo.")

request_heartbeat=trueThen continues conversation normally.

Session 1, Message 110: Total tokens hit 8,200, exceeding flush threshold.

Session 4 (weeks later): User asks: “Remember when I told you about that ramen place in Tokyo?”

LLM reads question. Working context has “User name: Alice. Birthday: March 15.” but no ramen details.

LLM searches recall storage:

recall_storage.search("ramen Tokyo")

request_heartbeat=trueResults come back with the original message from session 1.

LLM also searches archival storage:

archival_storage.search("ramen Tokyo")

request_heartbeat=trueResults include the detailed note saved during memory pressure.

LLM responds with specifics from the retrieved context.

Total: 4 inference passes for one user question. Latency is higher than a single-pass response, but the answer draws from information that a fixed-context model would have lost entirely.

Recall: In MemGPT’s control flow, what happens when

the LLM’s output does not include request_heartbeat=true?

What happens when it does?

Apply: Design the sequence of events and function calls for this scenario: a MemGPT document analysis agent has 500 Wikipedia articles in archival storage. The user asks “Which country has the most UNESCO World Heritage Sites?” The agent needs to search, find the relevant article, and respond. Write out each inference pass, the function called, and whether the heartbeat flag is set.

Extend: MemGPT has no formal memory management policy – the LLM decides what to save and evict based on natural language instructions in the system prompt. What are the risks of this approach? Consider: what happens if the LLM misjudges what is important? How might you add a learned eviction policy that improves over time? What training signal would you use?

MemGPT’s core analogy maps LLM context windows to RAM and external databases to disk. Where does this analogy break down? Consider: in a traditional OS, page faults are transparent to applications – they happen automatically. In MemGPT, the LLM must explicitly request retrieval. What are the consequences of this difference?

The paper shows that MemGPT with GPT-4 achieves 92.5% accuracy on the deep memory retrieval task, while the GPT-4 baseline achieves only 32.1%. Both models have the same underlying capabilities. What specifically about the MemGPT architecture enables this 60-percentage-point improvement? Why can’t the baseline achieve similar performance with a better prompt?

Standard RAG (see Retrieval-Augmented Generation) fetches documents once before the model runs. MemGPT lets the model fetch iteratively. When would standard RAG be sufficient, and when would you need MemGPT’s iterative approach? Give a concrete example of each case.

The paper does not report latency or token cost. A single user question might trigger 5-10 sequential LLM inference calls in MemGPT. In a production system, how would you balance the accuracy benefits of self-directed retrieval against the latency and cost multiplier? What optimizations might reduce the number of inference calls needed?

MemGPT’s recursive summarization is lossy – details are inevitably compressed away during each flush. The “lost in the middle” paper (see Lost in the Middle) showed that models struggle with information in the middle of long contexts. How do these two findings relate? Does MemGPT’s approach of keeping contexts short and focused address the “lost in the middle” problem, or does it create a different kind of information loss?

Build a simplified MemGPT memory manager that maintains a two-tier memory hierarchy with a FIFO queue, working context, archival storage, recursive summarization, and a queue manager with warning and flush thresholds – demonstrating the core data flow of the paper using numpy and plain Python only.

You will implement a MemoryManager class that simulates

MemGPT’s memory hierarchy without an actual LLM. Instead of LLM

inference, function calls are made explicitly by the test harness. The

system must:

import numpy as np

def token_count(text):

"""Estimate token count as word count (rough approximation)."""

return len(text.split())

def cosine_similarity(a, b):

"""

Compute cosine similarity between two vectors.

sim(a, b) = (a . b) / (||a|| * ||b||)

TODO: Implement using numpy. Handle the edge case where

either vector has zero norm (return 0.0).

"""

# TODO: implement

pass

class WorkingContext:

"""Fixed-size scratchpad for key facts. Writable only via explicit calls."""

def __init__(self, max_tokens=100):

self.max_tokens = max_tokens

self.entries = [] # list of strings

def token_usage(self):

return sum(token_count(e) for e in self.entries)

def append(self, text):

"""

Add a new entry. Return error string if it would exceed capacity.

TODO: Check if adding this text would exceed max_tokens.

If yes, return an error message (string).

If no, append the text and return a success message.

"""

# TODO: implement

pass

def replace(self, old_text, new_text):

"""

Replace an existing entry with new text.

TODO: Find the entry matching old_text exactly.

Replace it with new_text. Check that the replacement

does not exceed capacity. Return appropriate message.

"""

# TODO: implement

pass

def contents(self):

return " | ".join(self.entries) if self.entries else "(empty)"

class ArchivalStorage:

"""Vector-searchable external storage for arbitrary text."""

def __init__(self, embedding_dim=8):

self.documents = [] # list of strings

self.embeddings = [] # list of numpy arrays

self.embedding_dim = embedding_dim

self.rng = np.random.default_rng(42)

def _embed(self, text):

"""Create a deterministic pseudo-embedding from text.

(In real MemGPT, this calls an embedding model like ada-002.)

"""

seed = sum(ord(c) for c in text) % (2**31)

rng = np.random.default_rng(seed)

vec = rng.standard_normal(self.embedding_dim)

return vec / (np.linalg.norm(vec) + 1e-10)

def insert(self, text):

"""Store a text document with its embedding."""

self.documents.append(text)

self.embeddings.append(self._embed(text))

return f"Inserted into archival storage ({len(self.documents)} total docs)."

def search(self, query, page=1, page_size=3):

"""

Search archival storage by cosine similarity to query.

Return paginated results.

TODO:

1. Embed the query using self._embed(query)

2. Compute cosine similarity between query embedding

and every document embedding

3. Sort documents by similarity (highest first)

4. Return the requested page of results, formatted as:

"Showing {count} of {total} results (page {page}/{total_pages}):\n"

followed by each result on its own line with index.

"""

# TODO: implement

pass

class RecallStorage:

"""Complete log of all evicted messages, searchable by keyword."""

def __init__(self):

self.messages = [] # list of (timestamp, text) tuples

def store(self, messages, timestamp):

"""Store a batch of evicted messages."""

for msg in messages:

self.messages.append((timestamp, msg))

def search(self, query, max_results=5):

"""

Search recall storage by keyword matching.

TODO: Find messages containing the query string

(case-insensitive). Return up to max_results matches,

formatted with their timestamps.

"""

# TODO: implement

pass

class MemoryManager:

"""

The MemGPT queue manager: coordinates main context and external storage.

"""

def __init__(self, context_window=200, system_prompt_tokens=50,

working_context_max=100, warning_pct=0.70, flush_pct=1.0):

self.context_window = context_window

self.system_prompt_tokens = system_prompt_tokens

self.warning_pct = warning_pct

self.flush_pct = flush_pct

self.working_context = WorkingContext(max_tokens=working_context_max)

self.archival = ArchivalStorage()

self.recall = RecallStorage()

self.fifo_queue = ["No prior conversation summary."]

self.flush_count = 0

self.event_log = [] # log of system events for debugging

def total_tokens(self):

"""Calculate total token usage across all main context sections."""

fifo_tokens = sum(token_count(m) for m in self.fifo_queue)

wc_tokens = self.working_context.token_usage()

return self.system_prompt_tokens + wc_tokens + fifo_tokens

def warning_threshold(self):

return int(self.context_window * self.warning_pct)

def flush_threshold(self):

return int(self.context_window * self.flush_pct)

def _generate_summary(self, old_summary, evicted_messages):

"""

Simulate recursive summarization (real MemGPT uses an LLM call).

Concatenate old summary with evicted content, then truncate.

TODO: Create a new summary by combining the old summary with

a brief representation of the evicted messages. Since we don't

have an LLM, simulate summarization by taking the first 5 words

of each evicted message and prepending the old summary.

Keep total summary under 30 words.

"""

# TODO: implement

pass

def add_message(self, message, timestamp=0):

"""

Add a message to the FIFO queue and handle memory management.

TODO:

1. Append the message to self.fifo_queue

2. Check if total tokens exceed the warning threshold

-> if yes, log a memory pressure warning

3. Check if total tokens exceed the flush threshold

-> if yes, perform eviction:

a. Remove the oldest ~50% of messages (excluding index 0,

which is the summary)

b. Generate a new recursive summary

c. Store evicted messages in recall storage

d. Replace fifo_queue[0] with the new summary

4. Return a status dict with: total_tokens, warning_fired (bool),

flush_fired (bool)

"""

# TODO: implement

pass

def status(self):

"""Print current memory state."""

tokens = self.total_tokens()

pct = tokens / self.context_window * 100

return (

f"Tokens: {tokens}/{self.context_window} ({pct:.0f}%)\n"

f"FIFO messages: {len(self.fifo_queue)}\n"

f"Working context: {self.working_context.contents()}\n"

f"Archival docs: {len(self.archival.documents)}\n"

f"Recall messages: {len(self.recall.messages)}\n"

f"Flushes: {self.flush_count}"

)

def run_simulation():

"""Simulate a multi-turn MemGPT conversation."""

mgr = MemoryManager(

context_window=200,

system_prompt_tokens=50,

working_context_max=30,

warning_pct=0.70,

flush_pct=1.0,

)

print("=== MemGPT Memory Manager Simulation ===\n")

print(f"Context window: {mgr.context_window} tokens")

print(f"Warning threshold: {mgr.warning_threshold()} tokens (70%)")

print(f"Flush threshold: {mgr.flush_threshold()} tokens (100%)")

print()

# Phase 1: User introduces themselves

print("--- Phase 1: User introduction ---")

result = mgr.add_message("Hi my name is Alice and I love hiking in the mountains", timestamp=1)

print(f"Added message. {mgr.status()}\n")

# Agent saves facts to working context (simulating LLM function call)

wc_result = mgr.working_context.append("User: Alice. Hobby: hiking.")

print(f"Working context append: {wc_result}")

print(f"{mgr.status()}\n")

# Phase 2: Fill up context with conversation messages

print("--- Phase 2: Extended conversation ---")

topics = [

"I went to Yosemite last summer and hiked Half Dome it was amazing",

"The trail was steep but the views from the top were worth every step",

"I also visited Glacier Point and saw the sunset over the valley below",

"My favorite campsite was in the upper pines near the Merced River",

"We saw a black bear near our campsite it was scary but exciting",

"I want to visit Zion National Park next and hike Angels Landing trail",

"Have you ever heard of the Narrows hike at Zion through the river",

"I also love rock climbing especially at Joshua Tree National Park area",

"My friend Sarah and I are planning a trip to Patagonia next spring",

"We want to hike the W Trek in Torres del Paine for five days total",

"I need to get better boots though my current ones gave me blisters",

"Do you have any recommendations for waterproof hiking boots that last",

"I prefer lightweight boots that are good for scrambling on rocky terrain",

"Oh and I also need a new rain jacket mine is starting to delaminate",

]

for i, topic in enumerate(topics):

result = mgr.add_message(topic, timestamp=i + 2)

if result.get("warning_fired"):

print(f" [Message {i+2}] WARNING: Memory pressure! "

f"({mgr.total_tokens()}/{mgr.context_window} tokens)")

if result.get("flush_fired"):

print(f" [Message {i+2}] FLUSH: Evicted messages to recall storage. "

f"({mgr.total_tokens()}/{mgr.context_window} tokens)")

print(f"\n{mgr.status()}\n")

# Phase 3: Agent saves important info before pressure builds

print("--- Phase 3: Proactive memory management ---")

archival_result = mgr.archival.insert(

"Alice hiked Half Dome at Yosemite last summer and visited Glacier Point"

)

print(f"Archival insert: {archival_result}")

archival_result = mgr.archival.insert(

"Alice and Sarah are planning a Patagonia trip to hike the W Trek"

)

print(f"Archival insert: {archival_result}")

print()

# Phase 4: Demonstrate retrieval

print("--- Phase 4: Self-directed retrieval ---")

print("User asks: 'What was that national park I told you about?'\n")

# Search recall storage (keyword)

recall_results = mgr.recall.search("national park")

print(f"Recall search for 'national park':\n{recall_results}\n")

# Search archival storage (semantic similarity)

archival_results = mgr.archival.search("national park hiking trip")

print(f"Archival search for 'national park hiking trip':\n{archival_results}\n")

print("--- Final State ---")

print(mgr.status())

if __name__ == "__main__":

run_simulation()=== MemGPT Memory Manager Simulation ===

Context window: 200 tokens

Warning threshold: 140 tokens (70%)

Flush threshold: 200 tokens (100%)

--- Phase 1: User introduction ---

Added message. Tokens: 65/200 (32%)

FIFO messages: 2

Working context: (empty)

Archival docs: 0

Recall messages: 0

Flushes: 0

Working context append: Appended to working context (5/30 tokens used).

Tokens: 70/200 (35%)

FIFO messages: 2

Working context: User: Alice. Hobby: hiking.

Archival docs: 0

Recall messages: 0

Flushes: 0

--- Phase 2: Extended conversation ---

[Message 9] WARNING: Memory pressure! (145/200 tokens)

[Message 12] FLUSH: Evicted messages to recall storage. (112/200 tokens)

Tokens: 166/200 (83%)

FIFO messages: 8

Working context: User: Alice. Hobby: hiking.

Archival docs: 0

Recall messages: 7

Flushes: 1

--- Phase 3: Proactive memory management ---

Archival insert: Inserted into archival storage (1 total docs).

Archival insert: Inserted into archival storage (2 total docs).

--- Phase 4: Self-directed retrieval ---

User asks: 'What was that national park I told you about?'

Recall search for 'national park':

[t=7] I want to visit Zion National Park next and hike Angels Landing trail

[t=9] I also love rock climbing especially at Joshua Tree National Park area

Archival search for 'national park hiking trip':

Showing 2 of 2 results (page 1/1):

[1] Alice hiked Half Dome at Yosemite last summer and visited Glacier Point

[2] Alice and Sarah are planning a Patagonia trip to hike the W Trek

--- Final State ---

Tokens: 166/200 (83%)

FIFO messages: 8

Working context: User: Alice. Hobby: hiking.

Archival docs: 2

Recall messages: 7

Flushes: 1The exact token counts will vary with the token estimation heuristic, but the pattern should be clear: messages fill up context, a warning fires around 70%, a flush evicts old messages and stores them in recall storage, and both recall search (keyword) and archival search (cosine similarity) can retrieve information that is no longer in the main context.