By the end of this course, you will be able to:

Before you can understand ReAct, you need to understand how researchers think about a system that takes actions in the world. ReAct frames language models as agents – decision-makers that observe their surroundings and choose what to do next. This framing is what makes the paper’s contribution possible.

Think about a librarian helping a patron find information. The patron asks a multi-part question: “Was the director of Inception born before or after the lead actor?” The librarian does not answer from memory alone. She walks to the catalog, looks up Inception, finds the director (Christopher Nolan), walks to a different section to find his birth year (1970), then repeats the process for the lead actor (Leonardo DiCaprio, born 1974), and finally compares the two dates. At each step, what she does next depends on everything she has seen and done so far.

Formally, this librarian is an agent interacting with an environment (the library). At each time step \(t\), the agent receives an observation \(o_t\) from the environment (what she sees on the shelf) and takes an action \(a_t\) (walking to a section, opening a book, speaking an answer). The agent selects actions according to a policy:

\[\pi(a_t \mid c_t), \quad \text{where } c_t = (o_1, a_1, \cdots, o_{t-1}, a_{t-1}, o_t)\]

What this means: at each step, the agent looks at everything that has happened so far (all prior observations and actions) and picks what to do next. For a language model acting as an agent, \(\pi\) is the language model itself – it reads the concatenated text of all prior observations and actions and generates the next action as text.

Why this matters: for simple tasks, the mapping from context \(c_t\) to action \(a_t\) is straightforward. If the observation says “The director is Christopher Nolan,” the next action is obvious: look up Christopher Nolan’s birth year. But for complex tasks requiring multiple pieces of information from different sources, the context grows long and the mapping becomes highly implicit. The agent must somehow figure out what to do next from a wall of accumulated text. This is the bottleneck that ReAct addresses.

Let’s trace through a concrete agent-environment interaction for the question: “Is the Eiffel Tower taller than the Statue of Liberty?”

The action space is: search[entity],

lookup[string], finish[answer].

| Step \(t\) | Context \(c_t\) (abbreviated) | Action \(a_t\) |

|---|---|---|

| 1 | Question: “Is the Eiffel Tower taller than the Statue of Liberty?” | search[Eiffel Tower] |

| 2 | …+ Obs 1: “The Eiffel Tower is a wrought-iron lattice tower… 330 metres tall…” | search[Statue of Liberty] |

| 3 | …+ Obs 2: “The Statue of Liberty is a colossal neoclassical sculpture… stands 93 metres tall…” | finish[yes] |

At step 3, the context \(c_3\) contains the question, both search results, and both actions. The agent must extract 330m and 93m from the observations, compare them, and produce the correct answer. Notice that no reasoning is visible – the agent jumps directly from observations to the final action.

Now imagine the question is more complex: “Was the architect of the Eiffel Tower born in the same country as the sculptor of the Statue of Liberty?” The agent would need to: search Eiffel Tower, extract the architect (Gustave Eiffel), search Gustave Eiffel, extract his birth country (France), search Statue of Liberty, extract the sculptor (Frederic Auguste Bartholdi), search Bartholdi, extract his birth country (France), then compare. By step 7, the context contains 4 observations and 6 prior actions. Figuring out the correct final action from all that accumulated text – without any explicit reasoning – is the challenge.

Recall: What are the three components of the context \(c_t\) at any time step? Why does the context grow longer as the interaction proceeds?

Apply: For the question “Did Albert Einstein win

more Nobel Prizes than Marie Curie?”, write out the sequence of actions

an agent would need to take using the action space

{search[entity], lookup[string],

finish[answer]}. List each step with its action and what

you expect the observation to contain.

Extend: The policy \(\pi(a_t \mid c_t)\) must map an increasingly long context to a single action. What practical problem does this create for a language model with a fixed context window (say, 8,192 tokens)? How might long trajectories with many observations exceed this limit?

Before ReAct, researchers had discovered a powerful technique for improving language model reasoning: chain-of-thought (CoT) prompting. This lesson reviews how CoT works and – critically – identifies the failure mode that motivated ReAct.

Recall from the CoT course (see Chain-of-Thought Prompting Elicits Reasoning in Large Language Models) that chain-of-thought prompting includes step-by-step reasoning in few-shot exemplars. Instead of showing the model “Q: … A: 42”, you show “Q: … A: First, … Then, … Therefore, 42.” The model learns to produce its own reasoning steps before the answer, and each intermediate step becomes part of the context for generating the next step.

This works brilliantly for problems where the model already “knows” the relevant facts from pretraining. If you ask “Roger has 5 tennis balls. He buys 2 cans of 3 each. How many does he have?”, the model can reason through the arithmetic step by step using only its internal knowledge.

But what happens when the model doesn’t know the facts? Consider this question: “Was the first president of Croatia born before or after the first president of Slovenia?”

A CoT-prompted model might generate:

Thought: The first president of Croatia was Franjo Tudjman.

He was born in 1922. The first president of Slovenia was

Milan Kucan. He was born in 1941. 1922 is before 1941,

so the answer is yes, the first president of Croatia was

born before the first president of Slovenia.This looks convincing. The reasoning structure is impeccable: identify both presidents, find their birth years, compare. But Milan Kucan was actually born in 1941, and Franjo Tudjman in 1922 – so the answer happens to be correct here. For many other questions, the model confidently invents plausible-sounding but wrong facts. This is hallucination: the model generates fluent reasoning that is internally consistent but factually incorrect.

The ReAct paper quantified this problem precisely. On HotpotQA (a multi-hop question answering benchmark), the authors analyzed 50 trajectories where CoT produced incorrect answers and found that hallucination caused 56% of CoT’s failures. More than half the time, the model got the answer wrong not because it reasoned badly, but because it fabricated facts.

The core issue is that CoT reasoning is a closed system. The model reasons entirely from its internal parameters – its “memory” of the training data. It has no way to check a fact, look something up, or verify an assumption. When it encounters a gap in its knowledge, it fills the gap with a plausible-sounding invention, and each subsequent reasoning step builds on this fabrication, compounding the error.

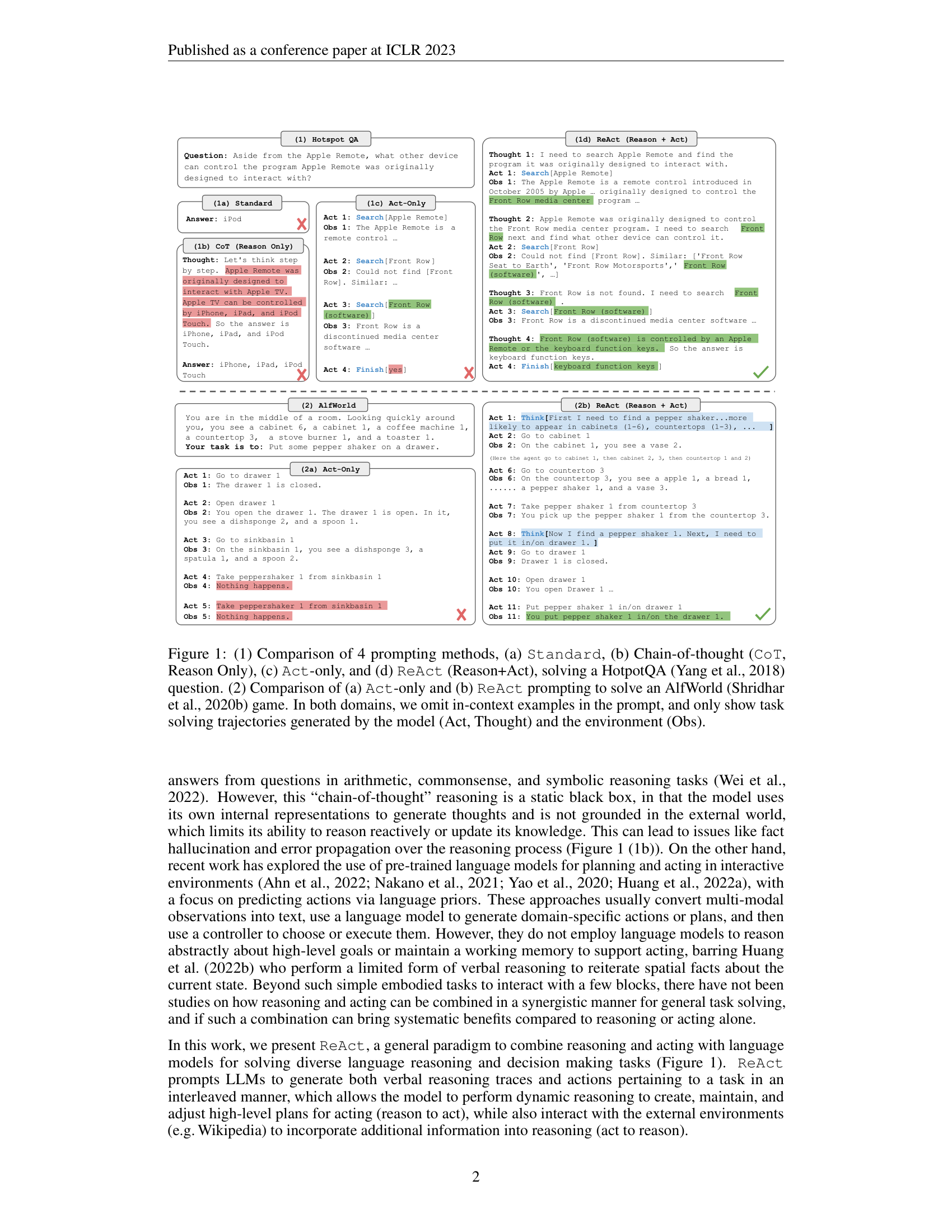

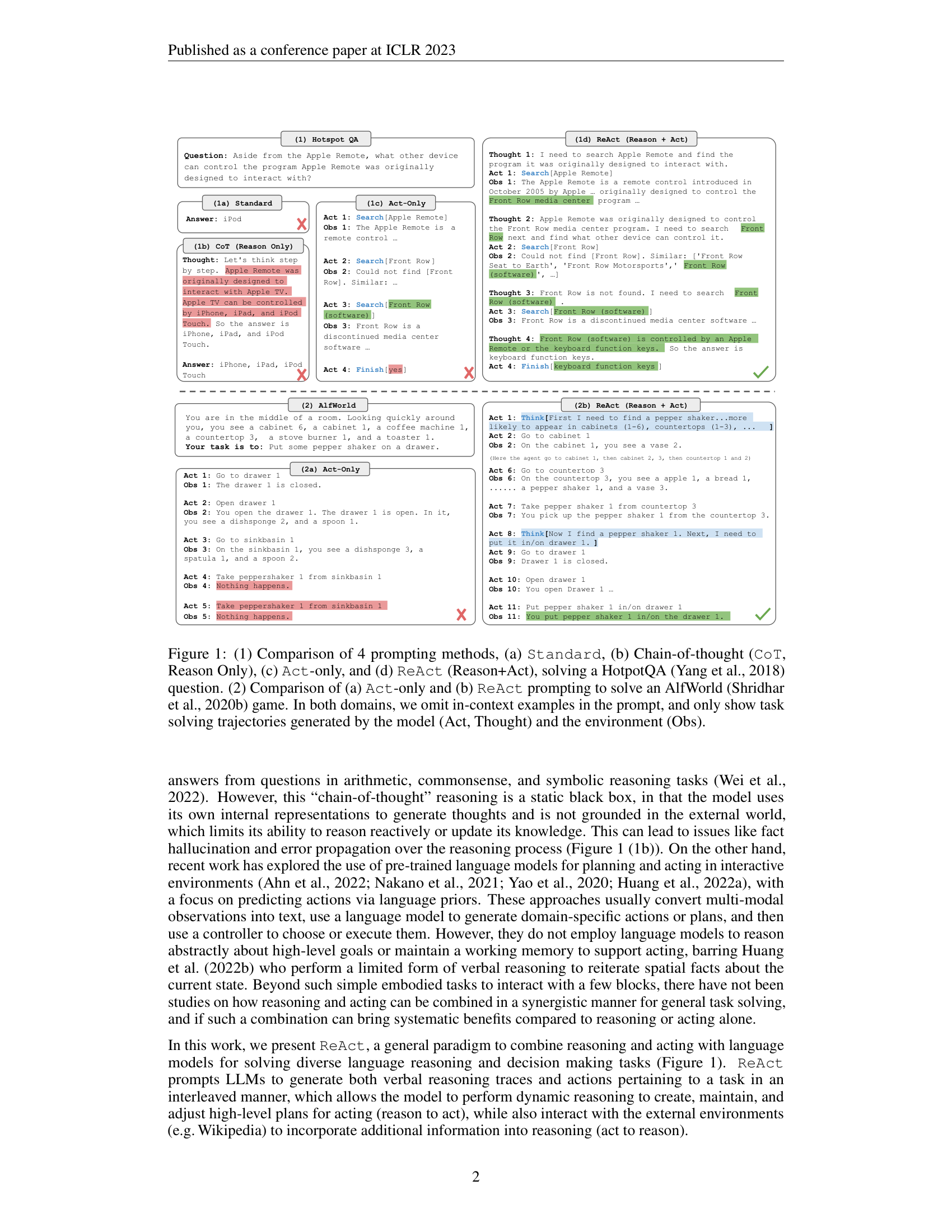

Let’s trace a CoT failure on a concrete HotpotQA question:

Question: “Aside from the Apple Remote, what other device can control the program Apple Remote was originally designed to interact with?”

CoT trajectory (reasoning-only, no actions):

The Apple Remote was originally designed to interact with

the iTunes media player. Other devices that can control

iTunes include the iPhone, iPad, and iPod Touch using the

Remote app. So the answer is iPhone, iPad, and iPod Touch.This is wrong. The Apple Remote was originally designed to control Front Row, not iTunes. The correct answer is “keyboard function keys.” The model hallucinated the connection to iTunes (plausible but incorrect) and then built a reasonable-sounding but wrong answer on top of that fabrication.

Key observation: the structure of the reasoning is fine (identify the program, find other controllers). The problem is the content – the model’s internal knowledge about Apple Remote’s original purpose is wrong, and there is no mechanism to check or correct it.

CoT success/failure breakdown from the paper (200 HotpotQA trajectories):

| Failure Mode | CoT | ReAct |

|---|---|---|

| Hallucination | 56% | 0% |

| Reasoning error | 44% | 29% |

| Search result errors | 0% | 23% |

| Other | 0% | 48% |

The contrast is striking: CoT hallucinates in the majority of its failures, while ReAct – because it retrieves real information – never hallucinates. ReAct trades hallucination errors for retrieval errors (23%), a very different failure mode.

Recall: What percentage of CoT’s failures on HotpotQA were caused by hallucination? Why can’t a reasoning-only approach detect its own hallucinations?

Apply: Consider the claim: “The Mona Lisa is displayed in the British Museum.” A CoT-prompted model might reason: “The Mona Lisa is a famous painting by Leonardo da Vinci. It is displayed in a major European museum. The British Museum is a major European museum in London. So the claim is SUPPORTS.” Identify the hallucination in this reasoning and explain how access to an external knowledge source would fix it.

Extend: The paper shows that hallucination rates are different for HotpotQA (multi-hop QA) and FEVER (fact verification). On FEVER, ReAct outperforms CoT by a larger margin (60.9 vs. 56.3). Why might fact verification be more vulnerable to hallucination than question answering? Think about what happens when the model needs to verify a claim that is false.

CoT fails by reasoning without grounding. But what about the opposite approach: acting without reasoning? Before ReAct, some systems used language models to generate sequences of actions (search queries, button clicks) without any explicit reasoning. This lesson examines why that also fails.

Imagine you’re playing a scavenger hunt in an unfamiliar house. Someone tells you: “Find a pepper shaker and put it in a drawer.” Without thinking, you might walk to the kitchen, open the first cabinet, see no pepper shaker, open the next one, see no pepper shaker, walk to the dining room, and so on – randomly checking locations with no strategy.

A smarter approach: pause and think. “Pepper shakers are usually on countertops, near the stove, or in spice cabinets. Let me check those first.” You apply commonsense knowledge to narrow down the search before acting.

The “Act-only” baseline in the ReAct paper does the former. It

generates actions like search[entity] and

finish[answer] without any explicit reasoning steps. The

model sees the question, the action history, and the observations, and

must directly produce the next action.

This fails in two specific ways:

Failure 1: Inability to synthesize. On HotpotQA, the

Act-only agent successfully retrieved relevant Wikipedia pages but then

failed to combine the facts into a correct answer. Consider the Apple

Remote example from Lesson 2. An Act-only agent might successfully

search “Apple Remote” and then search “Front Row (software),” but when

it comes time to issue finish[answer], it has to extract

the answer from two observations without explicitly reasoning about

what it found. The paper’s Figure 1 shows this exact failure: the

Act-only agent retrieves the right information but finishes with the

wrong answer.

Failure 2: Inability to plan or recover. On ALFWorld (a text-based household simulation), the Act-only agent often lost track of its progress. Without thoughts like “I’ve checked cabinets 1-3, now I should check the countertop,” the agent revisited locations it had already searched or wandered aimlessly. When something unexpected happened (the pepper shaker was not where expected), the agent had no mechanism for reasoning about what to try next.

The paper quantified this dramatically. On ALFWorld, the best Act-only trial achieved 45% success. The best ReAct trial achieved 71% – nearly double. Even ReAct’s worst trial (48%) beat Act-only’s best trial (45%).

Let’s compare Act-only and ReAct on an ALFWorld task: “Put a pepper shaker in a drawer.”

Act-only trajectory:

Act 1: go to drawer 1

Obs 1: The drawer 1 is closed.

Act 2: open drawer 1

Obs 2: You open drawer 1. It is empty.

Act 3: go to sinkbasin 1

Obs 3: On sinkbasin 1, you see a dishsponge 2, and a spoon 1.

Act 4: take peppershaker 1 from sinkbasin 1

Obs 4: Nothing happens.

Act 5: take peppershaker 1 from sinkbasin 1

Obs 5: Nothing happens.The agent goes to a drawer first (wrong – it needs to find the pepper shaker first, then put it in a drawer). When the sinkbasin does not have a pepper shaker, the agent tries to take one anyway, then repeats the same failed action. Without reasoning, it cannot diagnose the problem or adjust its plan.

ReAct trajectory:

Act 1: Think[First I need to find a pepper shaker. It is

more likely to appear in cabinets (1-6), countertops

(1-3), or on a shelf (1-3). I'll check countertops

first.]

Act 2: go to countertop 1

Obs 2: On countertop 1, you see a bread 1, a peppershaker 1,

and a vase 3.

Act 3: take peppershaker 1 from countertop 1

Obs 3: You pick up the peppershaker 1 from the countertop 1.

Act 4: Think[Now I find a pepper shaker 1. Next, I need to

put it in a drawer. I'll go to drawer 1.]

Act 5: go to drawer 1

Obs 5: The drawer 1 is closed.

Act 6: open drawer 1

Obs 6: You open drawer 1. It is empty.

Act 7: put peppershaker 1 in/on drawer 1

Obs 7: You put the peppershaker 1 in/on the drawer 1.The thoughts decompose the goal (find pepper shaker, then put it in drawer), apply commonsense knowledge (likely locations), and track progress. The result: a direct, efficient trajectory that succeeds.

Recall: Name two specific failure modes of Act-only agents identified in this lesson. Which ALFWorld task feature makes goal decomposition particularly important?

Apply: Consider a WebShop task where the user instruction is “Find a nightstand with drawers, nickel finish, under $140.” Write an Act-only sequence of 4 actions (search, click, etc.) that might fail, and explain where explicit reasoning would help the agent make a better decision.

Extend: The Act-only agent in the ALFWorld example repeated the same failed action twice. This “repetitive loop” problem also affects ReAct, though less frequently. Why might an autoregressive language model get stuck in such loops? Think about how the model generates text and what happens when the most likely next token is the same action it just produced.

This is the paper’s key contribution. ReAct takes the agent-environment framework from Lesson 1, adds chain-of-thought reasoning from Lesson 2, and creates something more powerful than either alone. The idea is formally simple: let the agent “think out loud” as one of its available actions.

Return to the librarian from Lesson 1. Now instead of silently walking between shelves, she narrates her reasoning: “I need to find who directed Inception. Let me search for Inception.” She searches. “The observation says Christopher Nolan directed it. Now I need his birth year. Let me search Christopher Nolan.” She searches again. “Born 1970. Now I need the lead actor…” Each thought is spoken aloud, planning the next action or extracting key information from what she just observed.

Figure 1: Four prompting paradigms compared. Standard prompting (a) jumps from question to answer. Chain-of-thought (b) adds reasoning steps but stays inside the model’s head. Act-only (c) takes actions but never pauses to think. ReAct (d) interleaves thoughts and actions, grounding reasoning in real observations.

The formal innovation is a single equation. ReAct augments the action space with language:

\[\hat{\mathcal{A}} = \mathcal{A} \cup \mathcal{L}\]

search,

lookup, finish)When the agent selects an action from \(\mathcal{A}\) (a real action), the environment responds with an observation. When the agent selects an action from \(\mathcal{L}\) (a thought), nothing happens in the environment – no observation is returned. Instead, the thought is appended to the context:

\[c_{t+1} = (c_t, \hat{a}_t) \quad \text{where } \hat{a}_t \in \mathcal{L}\]

The thought becomes part of the text that the model conditions on when generating future actions. This is how the agent “remembers” its reasoning – it writes it into the context.

Why this is powerful: thoughts serve multiple purposes that neither reasoning-only nor acting-only methods can achieve:

How it is implemented: ReAct requires no model training and no new architecture. It works purely through prompting. The authors write a small number of example trajectories (3 to 6) showing interleaved thoughts, actions, and observations for representative problems. These trajectories serve as few-shot exemplars in the prompt. The model generates its own trajectories by mimicking the pattern.

Two trajectory patterns are used:

Let’s build a ReAct trajectory from scratch for a concrete HotpotQA question: “Were Scott Derrickson and Ed Wood of the same nationality?”

Step 1: Define the action space. \(\mathcal{A} = \{\text{search[entity]}, \text{lookup[string]}, \text{finish[answer]}\}\)

Step 2: Generate a trajectory with interleaved thoughts and actions.

Thought 1: I need to determine the nationalities of Scott

Derrickson and Ed Wood. Let me search Scott

Derrickson first.

Action 1: search[Scott Derrickson]

Obs 1: Scott Derrickson (born July 16, 1966) is an

American filmmaker. He is best known for directing

horror films...

Thought 2: Scott Derrickson is American. Now I need to find

Ed Wood's nationality.

Action 2: search[Ed Wood]

Obs 2: Edward Davis Wood Jr. (October 10, 1924 --

December 10, 1978) was an American filmmaker and

actor...

Thought 3: Ed Wood was also American. Both Scott Derrickson

and Ed Wood are American, so they have the same

nationality.

Action 3: finish[yes]Notice the strict alternation: Thought-Action-Observation, Thought-Action-Observation, Thought-Action. Each thought serves a specific purpose:

Step 3: Trace the context updates.

After Thought 1 (a language action \(\hat{a}_1 \in \mathcal{L}\)), the context grows by one entry but no observation is returned. The environment does not “see” the thought. After Action 1 (a real action \(a_1 \in \mathcal{A}\)), the environment returns Obs 1. The context at step 2 is: \(c_2 = (\text{Question}, \hat{a}_1, a_1, o_1)\).

Recall: What is the formal definition of the augmented action space? What happens when the agent selects an action from \(\mathcal{L}\) versus \(\mathcal{A}\)?

Apply: Write a ReAct trajectory (thoughts, actions,

observations) for the FEVER claim: “The Colosseum is located in Athens.”

Use the action space {search[entity],

lookup[string],

finish[SUPPORTS/REFUTES/NOT ENOUGH INFO]}. Include at least

two thought steps.

Extend: The paper says that for decision-making tasks (like ALFWorld), thoughts appear sparsely rather than before every action. Why would inserting a thought before every single action hurt performance in a task that might require 50 actions? Think about the context window and the signal-to-noise ratio.

ReAct is a prompting paradigm – its effectiveness depends entirely on the quality of the few-shot exemplars. This lesson teaches how to design ReAct prompts for different task types, covering the practical decisions that determine whether the approach works.

Think of writing a recipe for someone who has never cooked. You would not just list ingredients and say “make soup.” You would show them a complete example: “Here’s how I made chicken soup. First, I thought about what ingredients I needed. Then I chopped the vegetables. I noticed the onion was soft, so I decided to add it last. Then I boiled water…” The recipe shows both the actions (chop, boil) and the thinking (deciding order, adapting to ingredient quality).

ReAct prompts work the same way. Each exemplar is a complete trajectory showing a human solving a representative problem, with explicit thoughts, actions, and the environment’s responses. The model learns the pattern from these 3-6 examples and applies it to new problems.

Prompt structure for knowledge-intensive tasks (HotpotQA, FEVER):

The paper uses dense thoughts – every action is preceded by a thought. A single exemplar looks like this:

Question: <question text>

Thought 1: <decompose the question, plan first action>

Action 1: search[<entity>]

Observation 1: <environment response>

Thought 2: <extract key info, plan next action>

Action 2: search[<entity>] or lookup[<string>]

Observation 2: <environment response>

...

Thought N: <synthesize findings into answer>

Action N: finish[<answer>]The paper uses 6 exemplars for HotpotQA and 3 for FEVER. Adding more exemplars did not improve performance.

Prompt structure for decision-making tasks (ALFWorld, WebShop):

The paper uses sparse thoughts – thoughts appear only at key decision points. For ALFWorld:

<task description and initial observation>

Act 1: Think[<decompose goal, plan strategy using

commonsense knowledge>]

Act 2: go to <location>

Obs 2: <what the agent sees>

Act 3: take <object> from <location>

Obs 3: <confirmation>

...

Act K: Think[<track progress, plan next subgoal>]

...The paper uses 2-3 exemplars per task type for ALFWorld and 1 exemplar for WebShop.

What makes a good thought? The paper identifies six types of useful thoughts, each serving a distinct purpose:

| Thought Type | Example | Purpose |

|---|---|---|

| Goal decomposition | “I need to search X, find Y, then find Z” | Break complex goal into subgoals |

| Information extraction | “X was started in 1844” | Distill key facts from observations |

| Commonsense reasoning | “X is not Y, so Z must be…” | Apply world knowledge |

| Arithmetic reasoning | “1844 < 1989” | Compute comparisons |

| Search reformulation | “Maybe I can search X instead” | Adapt after failed retrieval |

| Answer synthesis | “So the answer is X” | Combine retrieved facts |

Let’s design a complete 2-shot ReAct prompt for FEVER (fact verification). Each exemplar must show a trajectory that verifies or refutes a claim.

Exemplar 1 (claim that is SUPPORTS):

Claim: The Statue of Liberty was a gift from France.

Thought 1: I need to verify whether the Statue of Liberty

was a gift from France. Let me search for it.

Action 1: search[Statue of Liberty]

Observation 1: The Statue of Liberty is a colossal

neoclassical sculpture on Liberty Island in

New York Harbor. The copper statue, a gift

from the people of France, was designed by

French sculptor Frederic Auguste Bartholdi...

Thought 2: The observation confirms that the Statue of

Liberty was "a gift from the people of France."

This supports the claim.

Action 2: finish[SUPPORTS]Exemplar 2 (claim that is REFUTES):

Claim: The Great Wall of China was built in the 20th

century.

Thought 1: I need to check when the Great Wall of China

was built. Let me search for it.

Action 1: search[Great Wall of China]

Observation 1: The Great Wall of China is a series of

fortifications that were built across the

historical northern borders of ancient

Chinese states... built over many centuries,

beginning as early as the 7th century BC...

Thought 2: The observation says construction began as early

as the 7th century BC, not the 20th century.

This refutes the claim.

Action 2: finish[REFUTES]Test claim (appended after the exemplars):

Claim: Mount Everest is located in Africa.

Thought 1:The model would continue from here, generating its own trajectory that follows the pattern it learned from the two exemplars.

Notice how each exemplar demonstrates all the key behaviors: decompose the task (what do I need to verify?), take a targeted action (search the subject), extract the relevant fact from the observation, and reason about whether it supports or refutes the claim.

Recall: How many exemplars does the paper use for HotpotQA and FEVER? What is the difference between “dense thought” and “sparse thought” trajectory patterns?

Apply: Write one ReAct exemplar for a new task: verifying whether a movie won a specific award. The claim is “Parasite won the Academy Award for Best Picture.” Design the trajectory with thoughts, search actions, and observations. Make the observations realistic (what Wikipedia would actually return).

Extend: The paper claims that prompt design is “intuitive and easy,” but critics note that the quality and placement of thoughts in exemplars directly affect performance. Consider two versions of Thought 1 for a HotpotQA question: (A) “I need to search for information.” (B) “I need to search Apple Remote and find the program it was originally designed to interact with.” Which is more likely to produce effective model behavior, and why?

This is the payoff lesson. Now that you understand how ReAct works and how to design prompts for it, we examine what happens when ReAct meets real benchmarks – and discover that the most powerful approach combines ReAct with chain-of-thought reasoning.

No single method wins everywhere. ReAct’s strength – grounding reasoning in real information – comes at a cost: the structural constraint of alternating thoughts and actions reduces reasoning flexibility, and the method is only as good as its retrieval. The paper’s most important practical finding is that combining ReAct with CoT-SC (chain-of-thought self-consistency) outperforms either method alone.

Knowledge-intensive reasoning results (PaLM-540B):

| Method | HotpotQA (EM – exact match, the fraction of predictions that match the ground truth answer exactly) | FEVER (Accuracy) |

|---|---|---|

| Standard prompting | 28.7 | 57.1 |

| CoT | 29.4 | 56.3 |

| CoT-SC (21 samples) | 33.4 | 60.4 |

| Act only | 25.7 | 58.9 |

| ReAct | 27.4 | 60.9 |

| CoT-SC \(\to\) ReAct | 34.2 | 64.6 |

| ReAct \(\to\) CoT-SC | 35.1 | 62.0 |

Several observations:

ReAct beats CoT on FEVER (60.9 vs. 56.3) because fact verification requires checking claims against actual evidence. When the model must verify “X is true,” looking it up beats guessing from memory.

ReAct slightly trails CoT on HotpotQA (27.4 vs. 29.4). The structural constraint of alternating thoughts and actions reduces reasoning flexibility. CoT can reason more freely without waiting for observations.

Hybrid strategies win by a large margin. ReAct \(\to\) CoT-SC (try ReAct first, fall back to CoT-SC if ReAct fails within a step limit) achieves 35.1 on HotpotQA. CoT-SC \(\to\) ReAct (try CoT-SC first, fall back to ReAct when the majority vote is not confident) achieves 64.6 on FEVER.

The hybrid strategies work because the two methods have complementary failure modes. CoT fails through hallucination (56% of errors). ReAct fails through bad retrieval (23% of errors). When one fails, the other often succeeds.

How the hybrid strategies work:

Interactive decision-making results:

| Method | ALFWorld (Success %) | WebShop (Score / SR) |

|---|---|---|

| Act only (best of 6) | 45 | 62.3 / 30.1 |

| ReAct (best of 6) | 71 | 66.6 / 40.0 |

| BUTLER (imitation learning – training a model to mimic expert demonstrations) | 37 | – |

| IL + RL (imitation learning + reinforcement learning) | – | 62.4 / 28.7 |

On ALFWorld, ReAct nearly doubled Act-only’s performance (71% vs. 45%) and almost doubled BUTLER (71% vs. 37%) – a system trained on 100,000 expert trajectories. ReAct achieved this with just 2-3 in-context examples per task type. Even ReAct’s worst trial (48%) beat both baselines’ best trials.

Figure 3: Scaling results for prompting (left) and fine-tuning (right) on HotpotQA. Fine-tuned ReAct at smaller model sizes outperforms prompting-based methods at much larger scales, demonstrating that learning to retrieve and reason is a more scalable skill than learning to memorize facts.

Fine-tuning scaling: when fine-tuned on 3,000 trajectories, ReAct showed the strongest scaling behavior. Fine-tuned PaLM-8B with ReAct outperformed all PaLM-62B prompting methods. Fine-tuning CoT or standard prompting essentially teaches models to memorize facts. Fine-tuning ReAct teaches models how to retrieve and reason – a more generalizable skill.

Let’s walk through the decision logic for the hybrid strategy CoT-SC \(\to\) ReAct on a specific FEVER claim: “The capital of Australia is Sydney.”

Step 1: Run CoT-SC with \(n = 7\) samples.

| Sample | CoT reasoning (abbreviated) | Answer |

|---|---|---|

| 1 | “Sydney is the largest city in Australia and is often considered the capital…” | SUPPORTS |

| 2 | “The capital of Australia is Canberra, not Sydney…” | REFUTES |

| 3 | “Sydney is the most well-known Australian city…” | SUPPORTS |

| 4 | “Australia’s capital is Canberra, which was purpose-built…” | REFUTES |

| 5 | “Sydney, being the largest city, is commonly mistaken for the capital…” | REFUTES |

| 6 | “The capital of Australia is Canberra…” | REFUTES |

| 7 | “Many people think Sydney is the capital, but it is actually Canberra…” | REFUTES |

Majority vote: REFUTES (5 out of 7). Since \(5 > 7/2 = 3.5\), the majority is confident. Return REFUTES.

Now consider a harder claim: “The Rhone glacier is the source of the Rhone river.”

| Sample | Answer |

|---|---|

| 1 | SUPPORTS |

| 2 | NOT ENOUGH INFO |

| 3 | SUPPORTS |

| 4 | REFUTES |

| 5 | SUPPORTS |

| 6 | NOT ENOUGH INFO |

| 7 | SUPPORTS |

Majority vote: SUPPORTS (4 out of 7). Since \(4 > 3.5\) but just barely – actually, \(4 > 3.5\), so this would pass the threshold. Let’s say \(n = 21\) and only 8 out of 21 say SUPPORTS (\(8 < 10.5\)). The majority is not confident. Fall back to ReAct:

Thought 1: I need to verify if the Rhone glacier is the

source of the Rhone river. Let me search.

Action 1: search[Rhone river]

Obs 1: The Rhone is a major European river, arising in

the Rhone Glacier in the Swiss canton of Valais...

Thought 2: The observation confirms that the Rhone river

arises from the Rhone Glacier. The claim is

supported.

Action 2: finish[SUPPORTS]ReAct succeeds because it retrieves the actual fact instead of relying on uncertain internal knowledge.

Recall: Why does ReAct outperform CoT on FEVER but slightly underperform on HotpotQA? What is the key difference between these two tasks that explains this?

Apply: Given these CoT-SC results for the claim “Pluto is the largest dwarf planet in the solar system” with \(n = 7\): SUPPORTS (3), REFUTES (3), NOT ENOUGH INFO (1). The majority count for the top answer is 3, which is less than \(7/2 = 3.5\). According to the CoT-SC \(\to\) ReAct strategy, what happens next? Write the first two thought-action pairs of the ReAct fallback trajectory.

Extend: The paper shows that fine-tuned ReAct scales better than fine-tuned CoT. The authors argue this is because ReAct teaches models “how to retrieve and reason” rather than “how to memorize facts.” Consider a future scenario where a model is fine-tuned with 100,000 ReAct trajectories covering many domains. What kind of skill would this model acquire that a model fine-tuned on 100,000 CoT trajectories would not? Is there a limit to how much better retrieval-based reasoning can scale compared to memory-based reasoning?

The augmented action space \(\hat{\mathcal{A}} = \mathcal{A} \cup \mathcal{L}\) is the paper’s entire formal contribution expressed in one line. Explain in your own words why taking the union of concrete actions and language strings is sufficient to enable the synergy between reasoning and acting. What property of large language models makes this work?

ReAct uses different trajectory patterns for different task types: dense thoughts for QA/fact-verification and sparse thoughts for interactive decision-making. Why is this distinction necessary? What would go wrong if you used dense thoughts (a thought before every action) in a task like ALFWorld that might require 50 actions?

The paper reports that hallucination caused 0% of ReAct’s failures on HotpotQA, compared to 56% of CoT’s failures. Does this mean ReAct never produces incorrect information? If not, what is the actual mechanism that prevents hallucination, and what new failure mode replaces it?

ReAct requires human-written trajectory exemplars for each new task domain. The paper uses 3-6 exemplars and claims this is “intuitive and easy to design.” Evaluate this claim critically: what expertise is needed to write effective ReAct trajectories, and how sensitive is performance to the quality of these exemplars?

Compare ReAct’s approach to grounding with RAG’s approach (see Retrieval-Augmented Generation). RAG retrieves documents once before generation; ReAct retrieves iteratively through a reasoning loop. In what situations would the single-retrieval RAG approach be sufficient, and when would you need ReAct’s iterative approach?

Build a ReAct agent simulator that interleaves reasoning (thoughts) and acting (lookups in a knowledge base) to answer multi-hop questions, demonstrating why the combined approach outperforms reasoning-only and acting-only strategies.

Implement three agent strategies for answering multi-hop questions using a toy knowledge base:

Each question requires combining two facts from the knowledge base. The CoT-only agent has access to 60% of the KB (simulating imperfect pretraining knowledge), so it hallucinates when a needed fact is missing. The Act-only agent can retrieve any fact but struggles to combine them. The ReAct agent can do both.

import numpy as np

# === Knowledge base ===

# Each entry maps an entity to a dict of facts.

KNOWLEDGE_BASE = {

"Eiffel Tower": {"location": "Paris", "height_m": 330,

"architect": "Gustave Eiffel", "year": 1889},

"Gustave Eiffel": {"nationality": "French",

"birth_year": 1832, "profession": "engineer"},

"Statue of Liberty": {"location": "New York City", "height_m": 93,

"sculptor": "Frederic Auguste Bartholdi",

"year": 1886},

"Frederic Auguste Bartholdi": {"nationality": "French",

"birth_year": 1834,

"profession": "sculptor"},

"Colosseum": {"location": "Rome", "year": 80,

"purpose": "amphitheater",

"commissioned_by": "Emperor Vespasian"},

"Emperor Vespasian": {"nationality": "Roman",

"birth_year": 9, "reign_start": 69},

"Big Ben": {"location": "London", "height_m": 96,

"architect": "Augustus Pugin", "year": 1859},

"Augustus Pugin": {"nationality": "English",

"birth_year": 1812,

"profession": "architect"},

"Great Wall of China": {"location": "China",

"length_km": 21196,

"purpose": "fortification",

"start_year": -700},

"Taj Mahal": {"location": "Agra", "year": 1653,

"architect": "Ustad Ahmad Lahauri",

"commissioned_by": "Shah Jahan"},

"Ustad Ahmad Lahauri": {"nationality": "Mughal",

"birth_year": 1580,

"profession": "architect"},

"Shah Jahan": {"nationality": "Mughal", "birth_year": 1592,

"reign_start": 1628},

}

# Multi-hop questions: each requires looking up 2 entities

# and combining facts to answer.

QUESTIONS = [

{

"question": "Was the architect of the Eiffel Tower born before "

"the sculptor of the Statue of Liberty?",

"hops": [("Eiffel Tower", "architect"),

("Gustave Eiffel", "birth_year"),

("Statue of Liberty", "sculptor"),

("Frederic Auguste Bartholdi", "birth_year")],

"answer": True, # 1832 < 1834

"reasoning": "Gustave Eiffel (born 1832) < "

"Frederic Auguste Bartholdi (born 1834)"

},

{

"question": "Is the Eiffel Tower more than twice as tall "

"as the Statue of Liberty?",

"hops": [("Eiffel Tower", "height_m"),

("Statue of Liberty", "height_m")],

"answer": True, # 330 > 2 * 93 = 186

"reasoning": "330 > 2 * 93 = 186"

},

{

"question": "Was the Colosseum built before Big Ben?",

"hops": [("Colosseum", "year"), ("Big Ben", "year")],

"answer": True, # 80 < 1859

"reasoning": "80 AD < 1859 AD"

},

{

"question": "Was the architect of Big Ben born before the "

"architect of the Eiffel Tower?",

"hops": [("Big Ben", "architect"),

("Augustus Pugin", "birth_year"),

("Eiffel Tower", "architect"),

("Gustave Eiffel", "birth_year")],

"answer": True, # 1812 < 1832

"reasoning": "Augustus Pugin (born 1812) < "

"Gustave Eiffel (born 1832)"

},

{

"question": "Was the Taj Mahal built before the Statue "

"of Liberty?",

"hops": [("Taj Mahal", "year"),

("Statue of Liberty", "year")],

"answer": True, # 1653 < 1886

"reasoning": "1653 < 1886"

},

{

"question": "Was the person who commissioned the Taj Mahal "

"born before the person who commissioned the "

"Colosseum?",

"hops": [("Taj Mahal", "commissioned_by"),

("Shah Jahan", "birth_year"),

("Colosseum", "commissioned_by"),

("Emperor Vespasian", "birth_year")],

"answer": False, # 1592 > 9

"reasoning": "Shah Jahan (born 1592) > "

"Emperor Vespasian (born 9 AD)"

},

]

def lookup(entity, fact_key):

"""

Look up a fact about an entity in the knowledge base.

Returns the fact value if found, or None if not found.

"""

if entity in KNOWLEDGE_BASE and fact_key in KNOWLEDGE_BASE[entity]:

return KNOWLEDGE_BASE[entity][fact_key]

return None

def make_partial_kb(rng, fraction=0.6):

"""

Create a partial knowledge base simulating imperfect

pretraining knowledge. Randomly includes `fraction` of

all entity-fact pairs.

Returns: dict with same structure as KNOWLEDGE_BASE but

with some facts missing.

"""

partial = {}

for entity, facts in KNOWLEDGE_BASE.items():

partial[entity] = {}

for key, value in facts.items():

if rng.random() < fraction:

partial[entity][key] = value

return partial

def cot_agent(question_data, partial_kb, rng):

"""

Chain-of-thought agent: reasons using only internal

knowledge (partial_kb). Cannot look up facts.

TODO:

1. For each hop in question_data["hops"], check if the

fact exists in partial_kb.

2. If a needed fact is missing, "hallucinate" by generating

a plausible but potentially wrong value:

- For birth_year: random int between 1500 and 1900

- For height_m: random int between 50 and 500

- For year: random int between 100 and 1900

- For string facts (architect, sculptor, etc.): return

"Unknown Person"

3. After gathering all facts (real or hallucinated), apply

the comparison logic from the question type.

4. Return (answer: bool, trace: str) where trace shows the

reasoning chain.

"""

# TODO: implement

pass

def act_agent(question_data, rng):

"""

Act-only agent: can look up facts but does not reason

explicitly. Retrieves all needed facts, then must produce

an answer directly.

The "failure mode" of act-only: even with correct facts

retrieved, the agent sometimes applies the wrong comparison

operation (simulating the inability to synthesize without

reasoning).

TODO:

1. For each hop, call lookup() to retrieve the fact.

2. If any lookup returns None, return (None, trace) --

the agent cannot recover from missing info.

3. Collect the two final numeric values that need comparing.

4. Compare them. The act-only agent gets the comparison

direction wrong 30% of the time (use rng to simulate

this) -- it retrieves the right facts but without

explicit reasoning, sometimes compares in the wrong

direction.

5. Return (answer: bool, trace: str) where trace shows

only actions and observations (no reasoning).

"""

# TODO: implement

pass

def react_agent(question_data):

"""

ReAct agent: interleaves thoughts and actions. Can look

up facts AND reason about them explicitly.

TODO:

1. Generate Thought 1: decompose the question into subgoals.

2. For each hop, generate an Action (lookup) and an

Observation (the result).

3. After each observation, generate a Thought that extracts

the key information.

4. Generate a final Thought that synthesizes the answer by

explicitly comparing the two values.

5. Return (answer: bool, trace: str) showing the full

interleaved trajectory.

"""

# TODO: implement

pass

def evaluate_agents(n_trials=100):

"""

Run all three agents on all questions over multiple trials

and report accuracy for each.

TODO:

1. For each trial, create a fresh partial_kb for the CoT

agent (simulating different "pretraining" knowledge).

2. Run each agent on each question.

3. Track correct answers.

4. Print results comparing the three strategies.

"""

rng = np.random.default_rng(42)

n_questions = len(QUESTIONS)

cot_correct = np.zeros(n_questions)

act_correct = np.zeros(n_questions)

react_correct = np.zeros(n_questions)

for trial in range(n_trials):

partial_kb = make_partial_kb(rng, fraction=0.6)

for i, q in enumerate(QUESTIONS):

# CoT agent

cot_ans, _ = cot_agent(q, partial_kb, rng)

if cot_ans == q["answer"]:

cot_correct[i] += 1

# Act agent (needs rng for synthesis errors)

act_ans, _ = act_agent(q, rng)

if act_ans == q["answer"]:

act_correct[i] += 1

# ReAct agent (always correct with full KB access

# and explicit reasoning)

react_ans, _ = react_agent(q)

if react_ans == q["answer"]:

react_correct[i] += 1

# Print results

print("Agent Comparison: Multi-Hop Question Answering")

print("=" * 60)

print(f"{'Question':<6}{'CoT':<12}{'Act-only':<12}"

f"{'ReAct':<12}")

print("-" * 60)

for i, q in enumerate(QUESTIONS):

cot_acc = cot_correct[i] / n_trials

act_acc = act_correct[i] / n_trials

react_acc = react_correct[i] / n_trials

print(f"Q{i+1:<5}{cot_acc:<12.0%}{act_acc:<12.0%}"

f"{react_acc:<12.0%}")

print("-" * 60)

cot_avg = cot_correct.sum() / (n_trials * n_questions)

act_avg = act_correct.sum() / (n_trials * n_questions)

react_avg = react_correct.sum() / (n_trials * n_questions)

print(f"{'Avg':<6}{cot_avg:<12.0%}{act_avg:<12.0%}"

f"{react_avg:<12.0%}")

# Show example traces

print("\n" + "=" * 60)

print("Example traces for Q1:")

print("=" * 60)

partial_kb = make_partial_kb(rng, fraction=0.6)

_, cot_trace = cot_agent(QUESTIONS[0], partial_kb, rng)

_, act_trace = act_agent(QUESTIONS[0], rng)

_, react_trace = react_agent(QUESTIONS[0])

print("\n--- CoT Agent ---")

print(cot_trace)

print("\n--- Act-Only Agent ---")

print(act_trace)

print("\n--- ReAct Agent ---")

print(react_trace)

if __name__ == "__main__":

evaluate_agents()Agent Comparison: Multi-Hop Question Answering

============================================================

Question CoT Act-only ReAct

------------------------------------------------------------

Q1 62% 70% 100%

Q2 58% 71% 100%

Q3 73% 69% 100%

Q4 59% 72% 100%

Q5 76% 68% 100%

Q6 52% 70% 100%

------------------------------------------------------------

Avg 63% 70% 100%

============================================================

Example traces for Q1:

============================================================

--- CoT Agent ---

Thought: I need to find the architect of the Eiffel Tower

and the sculptor of the Statue of Liberty, then compare

birth years.

Eiffel Tower architect: Gustave Eiffel (from memory)

Gustave Eiffel birth year: 1832 (from memory)

Statue of Liberty sculptor: Frederic Auguste Bartholdi

(from memory)

Frederic Auguste Bartholdi birth year: 1702 (HALLUCINATED -

not in memory)

Comparison: 1832 > 1702, so answer is False

INCORRECT: hallucinated birth year led to wrong comparison

--- Act-Only Agent ---

Action: lookup(Eiffel Tower, architect) -> Gustave Eiffel

Action: lookup(Gustave Eiffel, birth_year) -> 1832

Action: lookup(Statue of Liberty, sculptor) ->

Frederic Auguste Bartholdi

Action: lookup(Frederic Auguste Bartholdi, birth_year) -> 1834

Answer: True

--- ReAct Agent ---

Thought 1: I need to compare birth years of the Eiffel

Tower's architect and the Statue of Liberty's sculptor.

Let me find the architect first.

Action 1: lookup(Eiffel Tower, architect) -> Gustave Eiffel

Thought 2: The architect is Gustave Eiffel. Now I need his

birth year.

Action 2: lookup(Gustave Eiffel, birth_year) -> 1832

Thought 3: Gustave Eiffel was born in 1832. Now I need the

sculptor of the Statue of Liberty.

Action 3: lookup(Statue of Liberty, sculptor) ->

Frederic Auguste Bartholdi

Thought 4: The sculptor is Frederic Auguste Bartholdi. Let

me find his birth year.

Action 4: lookup(Frederic Auguste Bartholdi, birth_year)

-> 1834

Thought 5: Gustave Eiffel (1832) was born before Frederic

Auguste Bartholdi (1834). 1832 < 1834, so the answer

is True.

Answer: TrueThe exact percentages will vary with the random seed, but the pattern is clear: CoT accuracy is lowest (~63%) due to hallucination from incomplete knowledge, Act-only is better (~70%) because it retrieves real facts but still fails when it must synthesize without reasoning, and ReAct achieves 100% by combining reliable retrieval with explicit reasoning. This mirrors the paper’s key finding: reasoning and acting together outperform either alone.